Portkey provides a secure gateway for integrating Large Language Models (LLMs) into applications, including Mistral AI’s models. It gives you a fast AI gateway, observability, and prompt management, and it manages API keys securely through Model Catalog.

Quick Start

Get Mistral AI working in 3 steps:

Tip: You can also set provider="@mistral-ai" in Portkey() and use just model="mistral-large-latest" in the request.

Add Provider in Model Catalog

- Go to Model Catalog → Add Provider

- Select Mistral AI

- Choose existing credentials or create new by entering your Mistral AI API key

- Name your provider (e.g., mistral-ai-prod)
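
With the provider added, a first request might look like the sketch below. It assumes the Python SDK (the portkey_ai package), a Portkey API key in a PORTKEY_API_KEY environment variable, and a provider slug of @mistral-ai. The combined "@slug/model" form of the model string is also an assumption here, so use the slug that matches the name you gave your provider.

```python
import os


def build_chat_request(prompt, provider_slug="@mistral-ai",
                       model="mistral-large-latest"):
    """Build keyword arguments for a chat completion.

    Assumption: the model string joins the Model Catalog provider slug
    and the model name, e.g. "@mistral-ai/mistral-large-latest".
    """
    return {
        "model": f"{provider_slug}/{model}",
        "messages": [{"role": "user", "content": prompt}],
    }


def send_chat(prompt):
    """Send the request through Portkey (needs network access and a valid key)."""
    # Imported lazily so build_chat_request can be used without the SDK.
    from portkey_ai import Portkey

    client = Portkey(api_key=os.environ["PORTKEY_API_KEY"])
    response = client.chat.completions.create(**build_chat_request(prompt))
    return response.choices[0].message.content
```

Keeping the payload builder separate from the network call makes it easy to check the request shape before spending any tokens.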

Complete Setup Guide →

See all setup options, code examples, and detailed instructions

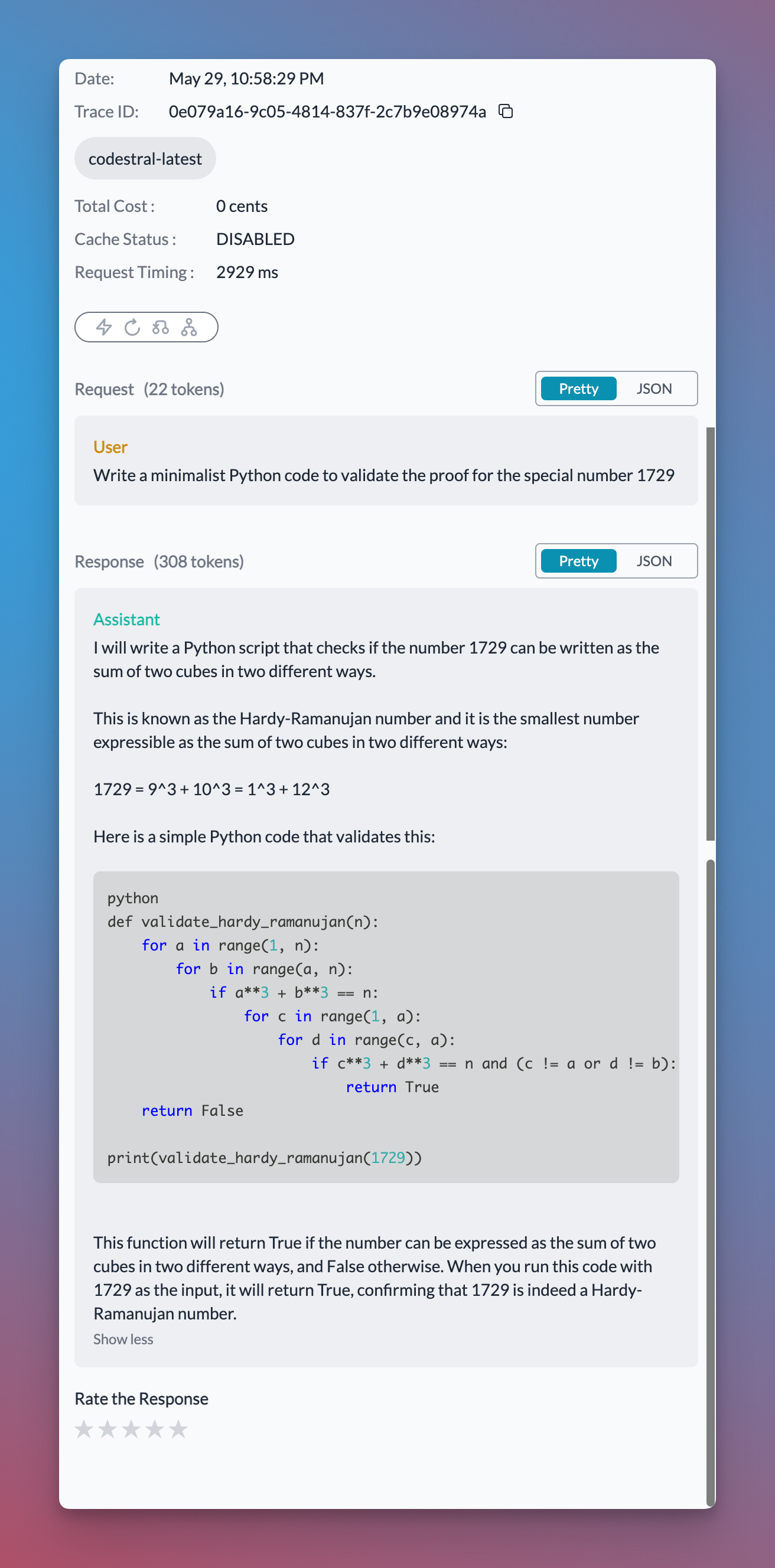

Codestral Endpoint

Mistral AI provides a dedicated Codestral endpoint for code generation. Use the customHost property to access it:
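
A minimal Python sketch of this setup follows. It assumes the Python SDK spells the property custom_host, that the Codestral base URL is https://codestral.mistral.ai/v1, and that the model is named codestral-latest. None of these values come from this page, so confirm them against your own account before use.

```python
import os

# Assumed value: check the Codestral base URL in Mistral's documentation.
CODESTRAL_HOST = "https://codestral.mistral.ai/v1"


def codestral_client_options(provider_slug="@mistral-ai"):
    """Client options that point the gateway at the Codestral endpoint."""
    return {
        "api_key": os.environ.get("PORTKEY_API_KEY", ""),
        "provider": provider_slug,
        "custom_host": CODESTRAL_HOST,  # assumed Python spelling of customHost
    }


def generate_code(task, model="codestral-latest"):
    """Ask Codestral for code (needs network access and valid credentials)."""
    # Imported lazily so the options helper works without the SDK installed.
    from portkey_ai import Portkey

    client = Portkey(**codestral_client_options())
    response = client.chat.completions.create(
        model=model,  # assumed model name
        messages=[{"role": "user", "content": task}],
    )
    return response.choices[0].message.content
```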

Structured Output (response_format)

Mistral AI supports structured output via the response_format parameter. You can enforce JSON schema validation for deterministic, structured responses:
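
As an illustration, the sketch below builds a request that asks for a reply matching a small JSON schema and then decodes it. The "book" schema, the field names, and the model string are hypothetical, and the json_schema layout assumes the OpenAI-compatible shape of response_format.

```python
import json

# Hypothetical schema used only for this example.
BOOK_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string"},
        "year": {"type": "integer"},
    },
    "required": ["title", "year"],
    "additionalProperties": False,
}


def build_structured_request(prompt, model="@mistral-ai/mistral-large-latest"):
    """Keyword arguments for chat.completions.create with a JSON schema."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "book", "schema": BOOK_SCHEMA, "strict": True},
        },
    }


def parse_structured_reply(content):
    """Decode the assistant message text and check the required fields."""
    data = json.loads(content)
    missing = [key for key in BOOK_SCHEMA["required"] if key not in data]
    if missing:
        raise ValueError(f"reply is missing fields: {missing}")
    return data
```

Checking the decoded reply on the client side is still worthwhile, since a schema constrains the shape of the output but not whether the values are correct.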

Mistral Tool Calling
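
The tool-calling loop covered in this section (define functions, let the model choose one, execute it, return the result) can be sketched roughly as follows. The get_weather tool and its fixed return value are hypothetical, and the message shapes assume the OpenAI-compatible chat format.

```python
import json

# Hypothetical tool definition for illustration only.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def get_weather(city):
    """Stand-in implementation; a real tool would call a weather service."""
    return {"city": city, "temperature_c": 21}


# Maps tool names the model may request to local Python functions.
LOCAL_TOOLS = {"get_weather": get_weather}


def run_tool_call(tool_call):
    """Execute one requested tool call and build the "tool" message to send back."""
    name = tool_call["function"]["name"]
    arguments = json.loads(tool_call["function"]["arguments"])
    result = LOCAL_TOOLS[name](**arguments)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }
```

In a real request you would pass tools=[WEATHER_TOOL] to the chat completion call, run each tool call found in the reply through run_tool_call, append the resulting messages to the conversation, and call the model again. The SDK returns tool calls as objects, not plain dicts, so adapt the field access accordingly.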

Tool calling lets models trigger external tools based on conversation context. You define available functions, the model chooses when to use them, and your application executes them and returns results. Portkey supports Mistral tool calling and makes it interoperable across multiple providers. With Portkey Prompts, you can templatize your prompts and tool schemas.

Managing Mistral AI Prompts

Manage all your Mistral AI prompt templates in the Prompt Library. All current Mistral AI models are supported, and you can easily test different prompts. Use the portkey.prompts.completions.create interface to use a saved prompt in an application.
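
A call to a saved prompt might look like the sketch below. The prompt ID and the variable names are placeholders that depend on the template you create in the Prompt Library, and the prompt_id and variables keyword names are assumed from the Python SDK.

```python
import os


def build_prompt_request(prompt_id, variables):
    """Keyword arguments for portkey.prompts.completions.create."""
    if not prompt_id:
        raise ValueError("prompt_id is required")
    # Copy the variables so later changes by the caller do not leak in.
    return {"prompt_id": prompt_id, "variables": dict(variables)}


def run_saved_prompt(prompt_id, variables):
    """Render and run a saved prompt (needs network access and a valid key)."""
    # Imported lazily so the builder works without the SDK installed.
    from portkey_ai import Portkey

    client = Portkey(api_key=os.environ["PORTKEY_API_KEY"])
    return client.prompts.completions.create(
        **build_prompt_request(prompt_id, variables)
    )
```

Because the model and parameters live in the saved template, the application code only supplies the prompt ID and the variable values.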

Next Steps

Add Metadata

Add metadata to your Mistral AI requests

Gateway Configs

Add gateway configs to your Mistral AI requests

Tracing

Trace your Mistral AI requests

Fallbacks

Set up a fallback from OpenAI to Mistral AI

SDK Reference

Complete Portkey SDK documentation