Swarm is an experimental framework by OpenAI for building multi-agent systems. It showcases the handoff & routines pattern, making agent coordination and execution lightweight, highly controllable, and easily testable. Portkey integration extends Swarm’s capabilities with production-ready features like observability, reliability, and more.

Getting Started

1. Install the Portkey SDK

2. Configure the LLM Client used in OpenAI Swarm

To build Swarm Agents with Portkey, you’ll need two keys:

- Portkey API Key: Sign up on the Portkey app and copy your API key.

- Provider: Add your LLM provider in Model Catalog to get a provider slug (e.g., @openai-prod).
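One way to wire the two values above into Swarm is to point an OpenAI-compatible client at the Portkey gateway and hand that client to Swarm. The sketch below is a minimal illustration, not the official snippet: the gateway URL and the `x-portkey-*` header names reflect my understanding of Portkey's public docs, the helper names `portkey_headers` and `make_swarm_client` are hypothetical, and it assumes the `openai` and `swarm` packages are installed.

```python
# Gateway URL and header names are assumptions based on Portkey's public docs;
# verify them against your Portkey account before relying on this.
PORTKEY_GATEWAY_URL = "https://api.portkey.ai/v1"


def portkey_headers(portkey_api_key: str, provider_slug: str) -> dict:
    """Build the HTTP headers that route a request through Portkey."""
    return {
        "x-portkey-api-key": portkey_api_key,
        "x-portkey-provider": provider_slug,
    }


def make_swarm_client(portkey_api_key: str, provider_slug: str = "@openai-prod"):
    """Return a Swarm client whose LLM calls go through the Portkey gateway."""
    # Imported lazily so portkey_headers() works without these packages.
    from openai import OpenAI
    from swarm import Swarm

    llm = OpenAI(
        # Assumption: provider credentials are stored in Model Catalog,
        # so the OpenAI key here is only a placeholder.
        api_key="placeholder",
        base_url=PORTKEY_GATEWAY_URL,
        default_headers=portkey_headers(portkey_api_key, provider_slug),
    )
    return Swarm(client=llm)
```

Calling `make_swarm_client("YOUR_PORTKEY_API_KEY")` would then give a client that can be used wherever a plain `Swarm()` instance is expected.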

3. Create and Run an Agent

In this example, we build a simple Weather Agent using OpenAI Swarm with Portkey.

E2E example with Function Calling in OpenAI Swarm

Here’s a complete example showing function calling and agent interaction. Example output:

The current temperature in New York City is 67°F.
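A weather agent with function calling might look roughly like the following. This is a sketch under stated assumptions: `get_weather` is a stub that returns a fixed reading rather than calling a real weather service, `run_weather_agent` is a hypothetical helper, and `client` is assumed to be a Swarm client already configured to route through Portkey.

```python
import json


def get_weather(location: str) -> str:
    """Get the current weather in a given location (stubbed, fixed reading)."""
    # A real agent would call a weather API here; the value is hard-coded
    # purely for illustration.
    return json.dumps({"location": location, "temperature": "67", "unit": "F"})


def run_weather_agent(client, question: str) -> str:
    """Ask a Swarm weather agent a question and return its final reply."""
    # Imported lazily so get_weather() can be tested without Swarm installed.
    from swarm import Agent

    agent = Agent(
        name="Weather Agent",
        instructions="You are a helpful weather assistant.",
        functions=[get_weather],  # Swarm exposes this function as a tool
    )
    response = client.run(
        agent=agent,
        messages=[{"role": "user", "content": question}],
    )
    # The last message holds the agent's final answer.
    return response.messages[-1]["content"]
```

The function's docstring and type hints matter here, since Swarm derives the tool description the model sees from them.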

Enabling Portkey Features

By routing your OpenAI Swarm requests through Portkey, you get access to the following production-grade features:

Interoperability

Call various LLMs like Anthropic, Gemini, Mistral, Azure OpenAI, Google Vertex AI, and AWS Bedrock with minimal code changes.

Caching

Speed up agent responses and save costs by storing past responses in the Portkey cache. Choose between Simple and Semantic cache modes.

Reliability

Set up fallbacks between different LLMs, load balance requests across multiple instances, set automatic retries, and request timeouts.

Metrics

Get comprehensive logs of agent interactions, including cost, tokens used, response time, and function calls. Send custom metadata for better analytics.

Logs

Access detailed logs of agent executions, function calls, and interactions. Debug and optimize your agents effectively.

Security & Compliance

Implement budget limits, role-based access control, and audit trails for your agent operations.

Continuous Improvement

Capture and analyze user feedback to improve agent performance over time.

1. Interoperability - Calling Different LLMs

When building with Swarm, you might want to experiment with different LLMs or use specific providers for different agent tasks. Portkey makes this seamless - you can switch between OpenAI, Anthropic, Gemini, Mistral, or cloud providers without changing your agent code. Instead of managing multiple API keys and provider-specific configurations, Portkey’s Model Catalog gives you a single point of control. Here’s how you can use different LLMs with your Swarm agents:

- Anthropic

- Azure OpenAI
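As a rough sketch of switching providers, the snippet below gives each agent a model string that names its provider. It assumes that Model Catalog accepts models in the form `@provider-slug/model-name`, which is my reading of Portkey's docs and should be verified. The slugs `@anthropic-prod` and `@azure-openai-prod`, the model names, and the helpers `catalog_model` and `build_agents` are all hypothetical placeholders.

```python
def catalog_model(provider_slug: str, model_name: str) -> str:
    """Join a provider slug and a model name into one model string."""
    if not provider_slug.startswith("@"):
        raise ValueError("provider slug is expected to start with '@'")
    return f"{provider_slug}/{model_name}"


def build_agents():
    """Create two Swarm agents that run on different providers."""
    # Imported lazily so catalog_model() works without Swarm installed.
    from swarm import Agent

    # Slugs and model names below are placeholders; use the ones that
    # exist in your own Model Catalog.
    writer = Agent(
        name="Writer",
        instructions="Draft clear, concise answers.",
        model=catalog_model("@anthropic-prod", "claude-3-5-sonnet-latest"),
    )
    reviewer = Agent(
        name="Reviewer",
        instructions="Check drafts for factual errors.",
        model=catalog_model("@azure-openai-prod", "gpt-4o"),
    )
    return writer, reviewer
```

Only the model string differs between the two agents; the instructions, functions, and the Swarm client stay the same.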

2. Caching - Speed Up Agent Responses

Agent operations often involve repetitive queries or similar tasks. Every time your agent makes an LLM call, you’re paying for tokens and waiting for responses. Portkey’s caching system can significantly reduce both costs and latency. Portkey offers two caching modes:

- Simple Cache: Perfect for exact matches, when your agents make identical requests. Ideal for deterministic operations like function calling or FAQ-type queries.

- Semantic Cache: Uses embedding-based matching to identify similar queries. Great for natural language interactions where users might ask the same thing in different ways.

3. Reliability - Keep Your Agents Running Smoothly

When running agents in production, things can go wrong - API rate limits, network issues, or provider outages. Portkey’s reliability features ensure your agents keep running smoothly even when problems occur.

Automatic Retries

Request Timeouts

Conditional Routing

Fallbacks

Load Balancing
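Like the cache modes in the previous section, these reliability features are set through a Portkey config attached to each request. The sketch below builds one config combining retries, a request timeout, a fallback between two providers, and an optional cache mode. The field names (`retry`, `request_timeout`, `strategy`, `targets`, `cache`) follow my understanding of Portkey's config schema and should be checked against the config reference. The function names and the `@anthropic-prod` slug are hypothetical.

```python
import json


def build_config(primary, backup, attempts=3, timeout_ms=10_000, cache_mode=None):
    """Build a Portkey-style config dict with retries, timeout and fallback."""
    config = {
        "retry": {"attempts": attempts},       # retry failed requests
        "request_timeout": timeout_ms,         # assumed to be in milliseconds
        "strategy": {"mode": "fallback"},      # try targets in order
        "targets": [{"provider": primary}, {"provider": backup}],
    }
    if cache_mode is not None:
        if cache_mode not in ("simple", "semantic"):
            raise ValueError("cache_mode must be 'simple' or 'semantic'")
        config["cache"] = {"mode": cache_mode}
    return config


def config_header(config: dict) -> dict:
    """Serialize the config into the header assumed to carry it."""
    return {"x-portkey-config": json.dumps(config)}


example = build_config("@openai-prod", "@anthropic-prod", cache_mode="semantic")
```

The resulting header can be merged into the default headers of the client that Swarm uses, so every agent call picks up the same policy. A config saved in the Portkey app could be referenced by its ID instead of being sent inline.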

4. Observability - Understand Your Agents

Building agents is the first step - but how do you know they’re working effectively? Portkey provides comprehensive visibility into your agent operations through multiple lenses:

Metrics Dashboard: Track 40+ key performance indicators like:

- Cost per agent interaction

- Response times and latency

- Token usage and efficiency

- Success/failure rates

- Cache hit rates

Send Custom Metadata with your requests

Add trace IDs to track specific workflows.

5. Logs and Traces

Logs are essential for understanding agent behavior, diagnosing issues, and improving performance. They provide a detailed record of agent activities and tool use, which is crucial for debugging and optimizing processes. Access a dedicated section to view records of agent executions, including parameters, outcomes, function calls, and errors. Filter logs based on multiple parameters such as trace ID, model, tokens used, and metadata.
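For logs to be filterable by trace ID and metadata, each request has to carry those values. The sketch below shows one way to attach them as request headers. It assumes the header names `x-portkey-trace-id` and `x-portkey-metadata`, which match my understanding of Portkey's docs; the helper `tracing_headers` and the metadata keys are hypothetical.

```python
import json
import uuid


def tracing_headers(metadata: dict, trace_id: str = None) -> dict:
    """Build headers that tag a request with a trace ID and custom metadata."""
    # Generate a random trace ID when the caller does not supply one.
    trace_id = trace_id or str(uuid.uuid4())
    return {
        "x-portkey-trace-id": trace_id,
        "x-portkey-metadata": json.dumps(metadata),  # sent as a JSON string
    }


headers = tracing_headers(
    {"user_id": "user-123", "environment": "staging"},
    trace_id="weather-run-1",
)
```

Reusing one trace ID across all calls made during a single agent run groups those calls together, which makes a multi-step handoff easier to follow in the logs.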

6. Security & Compliance - Enterprise-Ready Controls

When deploying agents in production, security is crucial. Portkey provides enterprise-grade security features:

Budget Controls

Access Management

Audit Logging

Data Privacy

7. Continuous Improvement

Now that you know how to trace & log your OpenAI Swarm requests to Portkey, you can also start capturing user feedback to improve your app. You can append qualitative as well as quantitative feedback to any trace ID with the portkey.feedback.create method.
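A feedback call might be sketched as follows. The `portkey.feedback.create` method is named in the text above; the keyword arguments `trace_id`, `value`, and `weight`, and the ranges enforced below, reflect my understanding of the Portkey SDK and should be checked against its reference. The helpers `feedback_payload` and `send_feedback` are hypothetical, and the example assumes the `portkey-ai` package is installed.

```python
def feedback_payload(trace_id: str, value: int, weight: float = 1.0) -> dict:
    """Validate and assemble the arguments for a feedback call."""
    if not trace_id:
        raise ValueError("trace_id is required")
    # Assumed ranges: value in [-10, 10], weight in [0, 1].
    if not -10 <= value <= 10:
        raise ValueError("value must be between -10 and 10")
    if not 0 <= weight <= 1:
        raise ValueError("weight must be between 0 and 1")
    return {"trace_id": trace_id, "value": value, "weight": weight}


def send_feedback(portkey_api_key: str, payload: dict):
    """Send feedback for a trace using the Portkey SDK."""
    # Imported lazily so feedback_payload() works without the SDK installed.
    from portkey_ai import Portkey

    portkey = Portkey(api_key=portkey_api_key)
    return portkey.feedback.create(**payload)


payload = feedback_payload("weather-run-1", value=5)
```

The trace ID passed here should be the same one attached to the original agent request, so the feedback lands on the right log entries.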

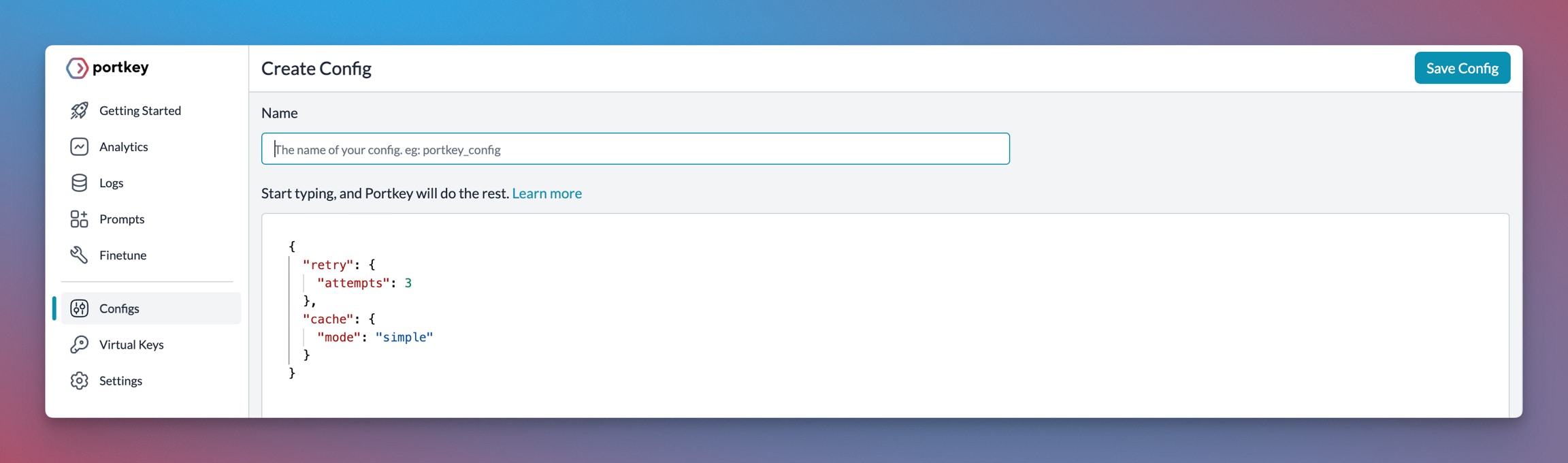

Portkey Config

Many of these features are driven by Portkey’s Config architecture. The Portkey app simplifies creating, managing, and versioning your Configs. For more information on using these features and setting up your Config, please refer to the Portkey documentation.