Portkey provides a robust and secure gateway to facilitate the integration of various Large Language Models (LLMs) into your applications, including your locally hosted models through LocalAI.

Portkey SDK Integration with LocalAI

1. Install the Portkey SDK

npm install --save portkey-ai

2. Initialize Portkey with LocalAI URL

First, ensure that your LocalAI API is externally accessible. If you’re running it on http://localhost, consider using a tool like ngrok to create a public URL. Then instantiate the Portkey client, setting the customHost property to your LocalAI URL (including the version identifier) and the provider to openai.

Note: Don’t forget to include the version identifier (e.g., /v1) in the customHost URL.

import Portkey from 'portkey-ai'

const portkey = new Portkey({
  apiKey: "PORTKEY_API_KEY", // defaults to process.env["PORTKEY_API_KEY"]
  provider: "openai",
  customHost: "https://7cc4-3-235-157-146.ngrok-free.app/v1" // Your LocalAI ngrok URL
})

from portkey_ai import Portkey

portkey = Portkey(
    api_key="PORTKEY_API_KEY",  # Replace with your Portkey API key
    provider="openai",
    custom_host="https://7cc4-3-235-157-146.ngrok-free.app/v1"  # Your LocalAI ngrok URL
)
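
Because a missing version identifier is an easy mistake to make, a small helper can normalize the URL before it is passed as custom_host. The following is a minimal sketch using only the Python standard library; the function name `ensure_version_path` and the `http://localhost:8080` address are illustrative assumptions and are not part of the Portkey SDK.

```python
from urllib.parse import urlsplit, urlunsplit


def ensure_version_path(base_url: str, version: str = "v1") -> str:
    """Return base_url ending in /<version>, appending the segment only if it is missing."""
    parts = urlsplit(base_url)
    path = parts.path.rstrip("/")  # drop any trailing slash before checking
    if not path.endswith("/" + version):
        path = f"{path}/{version}"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


if __name__ == "__main__":
    # Hypothetical local address; substitute your own LocalAI or ngrok URL.
    print(ensure_version_path("http://localhost:8080"))
    print(ensure_version_path("http://localhost:8080/v1/"))
```

The returned string can then be supplied as the custom_host value when constructing the client.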

Portkey currently supports all endpoints that adhere to the OpenAI specification. This means you can access and observe any of your LocalAI models that are exposed through OpenAI-compliant routes.

3. Invoke Chat Completions

Use the Portkey SDK to invoke chat completions from your LocalAI model, just as you would with any other provider.

const chatCompletion = await portkey.chat.completions.create({
  messages: [{ role: 'user', content: 'Say this is a test' }],
  model: 'ggml-koala-7b-model-q4_0-r2.bin',
});

console.log(chatCompletion.choices);

completion = portkey.chat.completions.create(
    messages=[{"role": "user", "content": "Say this is a test"}],
    model="ggml-koala-7b-model-q4_0-r2.bin"
)

print(completion)
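
The examples above print the whole response object. If only the generated text is needed, it can be read from the first choice. The sketch below assumes the response follows the OpenAI chat-completion shape (`choices[0].message.content`); the helper name `first_reply_text` is hypothetical, and a stand-in object replaces a live call so the snippet runs without a server.

```python
from types import SimpleNamespace


def first_reply_text(completion) -> str:
    """Return the text of the first choice, or an empty string if there is none."""
    choices = getattr(completion, "choices", None) or []
    if not choices:
        return ""
    return choices[0].message.content or ""


# Stand-in with the assumed OpenAI-style shape, used here instead of a real response.
fake_completion = SimpleNamespace(
    choices=[
        SimpleNamespace(
            message=SimpleNamespace(role="assistant", content="This is a test")
        )
    ]
)

if __name__ == "__main__":
    print(first_reply_text(fake_completion))
```

With a real client, the object returned by `portkey.chat.completions.create(...)` would be passed in place of `fake_completion`.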

You can also store your LocalAI connection details in a Portkey virtual key:

- Navigate to Virtual Keys in your Portkey dashboard

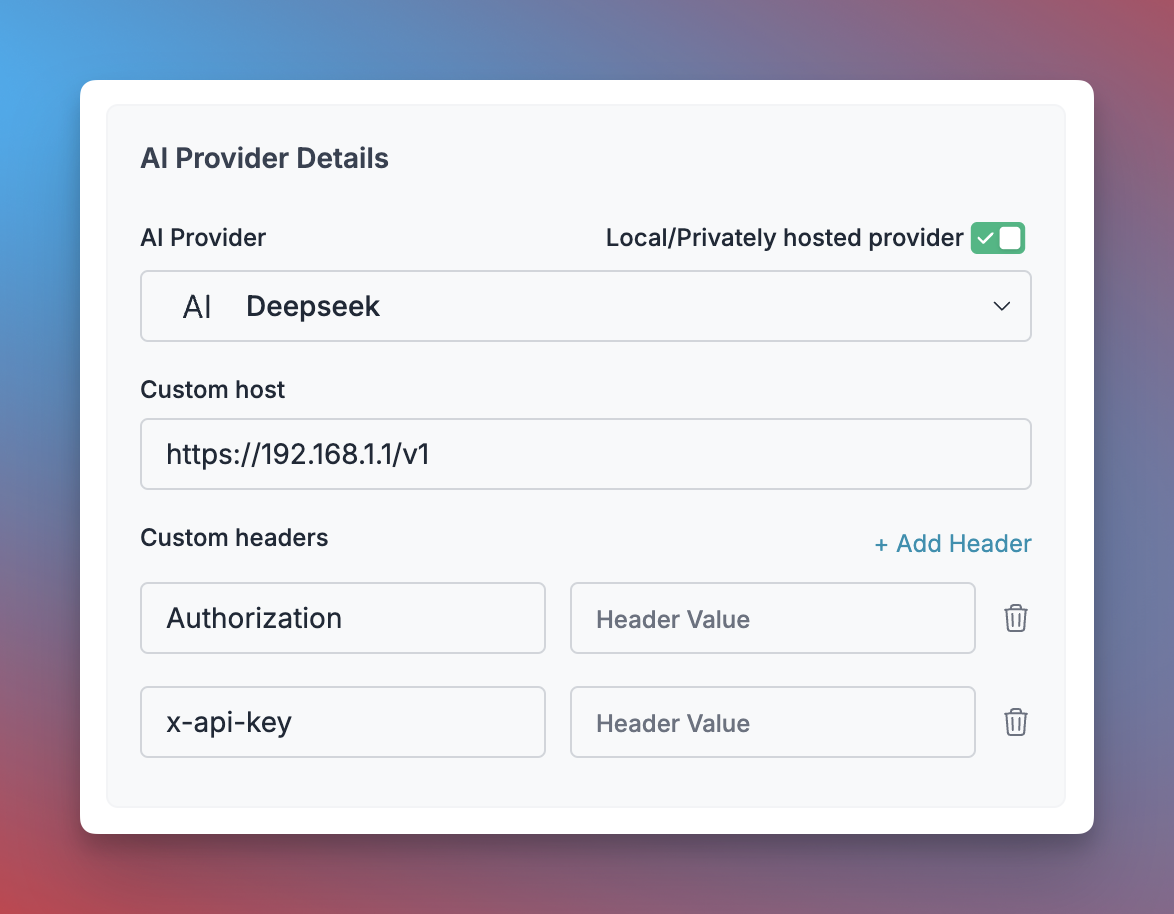

- Click “Add Key” and enable the “Local/Privately hosted provider” toggle

- Configure your deployment:
  - Select the matching provider API specification (typically OpenAI)
  - Enter your model’s base URL in the Custom Host field
  - Add required authentication headers and their values

- Click “Create” to generate your virtual key

You can now use this virtual key in your requests:

import Portkey from 'portkey-ai'

const portkey = new Portkey({
  apiKey: "PORTKEY_API_KEY",
  virtualKey: "YOUR_SELF_HOSTED_LLM_VIRTUAL_KEY"
})

async function main() {
  // Send a chat completion request through the virtual key
  const response = await portkey.chat.completions.create({
    messages: [{ role: "user", content: "Bob the builder.." }],
    model: "your-self-hosted-model-name",
  });

  console.log(response.choices[0].message.content);
}

main()

from portkey_ai import Portkey

portkey = Portkey(
    api_key="PORTKEY_API_KEY",
    virtual_key="YOUR_SELF_HOSTED_LLM_VIRTUAL_KEY"
)

response = portkey.chat.completions.create(
    model="your-self-hosted-model-name",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Hello!"}
    ]
)

print(response)

LocalAI Endpoints Supported

| Endpoint | Resource |
|---|---|
| /chat/completions (Chat, Vision, Tools support) | Doc |
| /images/generations | Doc |
| /embeddings | Doc |
| /audio/transcriptions | Doc |
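
To make the table concrete, the sketch below expands each listed path into a full URL. It assumes the routes resolve relative to the versioned custom host; the helper name `endpoint_urls` and the `http://localhost:8080/v1` address are illustrative only.

```python
# Paths copied from the table of supported LocalAI endpoints.
SUPPORTED_PATHS = (
    "/chat/completions",
    "/images/generations",
    "/embeddings",
    "/audio/transcriptions",
)


def endpoint_urls(custom_host: str) -> dict[str, str]:
    """Map each supported path to its full URL under the given custom host."""
    base = custom_host.rstrip("/")  # avoid a double slash when joining
    return {path: base + path for path in SUPPORTED_PATHS}


if __name__ == "__main__":
    # Hypothetical host; replace with your own LocalAI URL, including /v1.
    for path, url in endpoint_urls("http://localhost:8080/v1").items():
        print(f"{path} -> {url}")
```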

Next Steps

Explore the complete list of features supported in the SDK. You’ll find more information in the relevant sections:

- Add metadata to your requests

- Add gateway configs to your LocalAI requests

- Tracing LocalAI requests

- Set up a fallback from OpenAI to LocalAI APIs
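
As a rough illustration of the fallback item, a gateway config can list an OpenAI target first and a LocalAI target second. The sketch below only builds and prints such a config; the field names (`strategy`, `mode`, `targets`, `virtual_key`) reflect the Portkey config format as commonly documented and should be checked against the gateway config reference. The helper name and the key values are placeholders.

```python
import json


def fallback_config(primary_virtual_key: str, backup_virtual_key: str) -> dict:
    """Build a config that tries the primary target first and the backup if it fails."""
    return {
        "strategy": {"mode": "fallback"},
        "targets": [
            {"virtual_key": primary_virtual_key},  # tried first
            {"virtual_key": backup_virtual_key},   # used if the first target fails
        ],
    }


if __name__ == "__main__":
    # Placeholder virtual keys; replace with keys from your Portkey dashboard.
    config = fallback_config("OPENAI_VIRTUAL_KEY", "YOUR_SELF_HOSTED_LLM_VIRTUAL_KEY")
    print(json.dumps(config, indent=2))
```

The order of the entries in `targets` decides which provider is attempted first, so swapping them would make LocalAI the primary.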