Introduction

PydanticAI is a Python agent framework designed to make it less painful to build production-grade applications with Generative AI. It brings the same ergonomic design and developer experience to GenAI that FastAPI brought to web development. Portkey enhances PydanticAI with production-readiness features, turning your experimental agents into robust systems by providing:- Complete observability of every agent step, tool use, and interaction

- Built-in reliability with fallbacks, retries, and load balancing

- Cost tracking and optimization to manage your AI spend

- Access to 1600+ LLMs through a single integration

- Guardrails to keep agent behavior safe and compliant

- Version-controlled prompts for consistent agent performance

PydanticAI Official Documentation

Learn more about PydanticAI’s core concepts and features

Installation & Setup

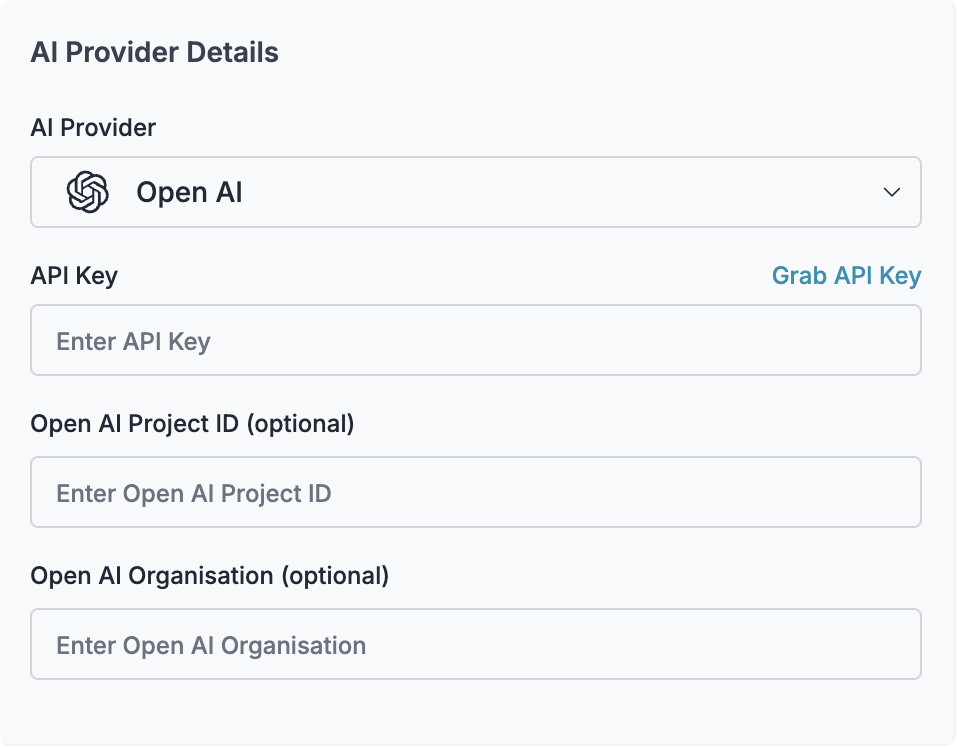

Generate API Key

Create a Portkey API key with optional budget/rate limits from the Portkey dashboard. You can attach configurations for reliability, caching, and more to this key.

Configure Portkey Client

For a simple setup, first configure the Portkey client that will be used with PydanticAI:

What are Virtual Keys? Virtual keys in Portkey securely store your LLM provider API keys (OpenAI, Anthropic, etc.) in an encrypted vault. They allow for easier key rotation and budget management. Learn more about virtual keys here.

Basic Agent Implementation

Let’s create a simple structured output agent with PydanticAI and Portkey. This agent will respond to a query about Formula 1 and return structured data:F1GrandPrix object with all fields properly typed and validated:

Advanced Features

Working with Images

PydanticAI supports multimodal inputs including images. Here’s how to use Portkey with a vision model:Tools and Tool Calls

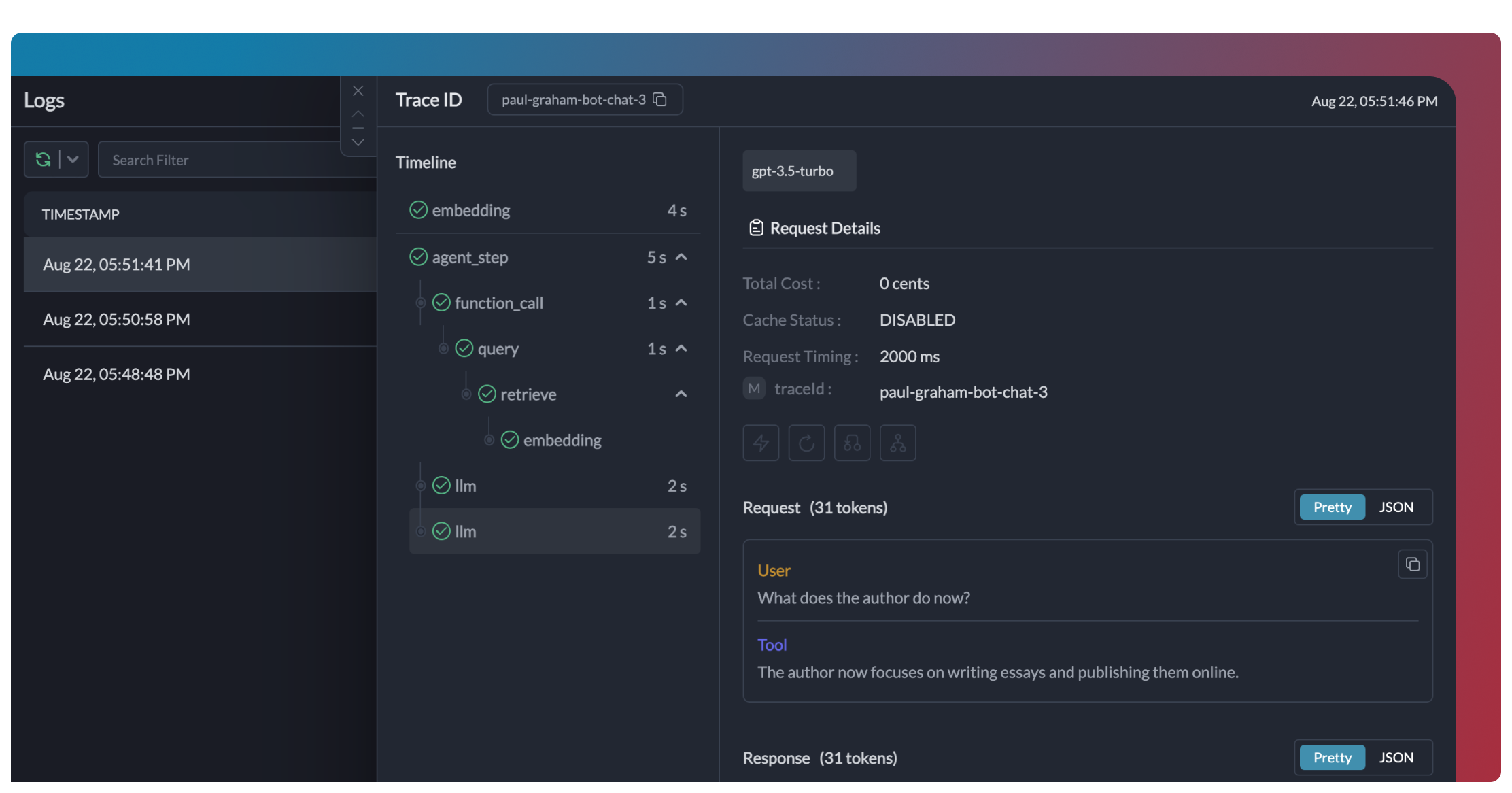

PydanticAI provides a powerful tools system that integrates seamlessly with Portkey. Here’s how to create an agent with tools:Portkey logs each tool call separately, allowing you to analyze the full execution path of your agent, including both LLM calls and tool invocations.

Multi-agent Applications

PydanticAI excels at creating multi-agent systems where agents can call each other. Here’s how to integrate Portkey with a multi-agent setup: This multi-agent system uses three specialized agents:search_agent - Orchestrates the flow and validates flight selections

extraction_agent - Extracts structured flight data from raw text

seat_preference_agent - Interprets user’s seat preferences

With Portkey integration, you get:

- Unified tracing across all three agents

- Token and cost tracking for the entire workflow

- Ability to set usage limits across the entire system

- Observability of both AI and human interaction points

Production Features

1. Enhanced Observability

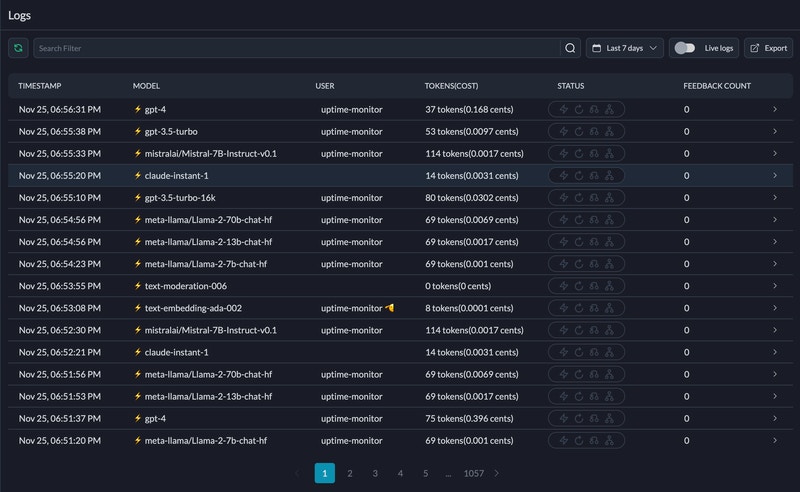

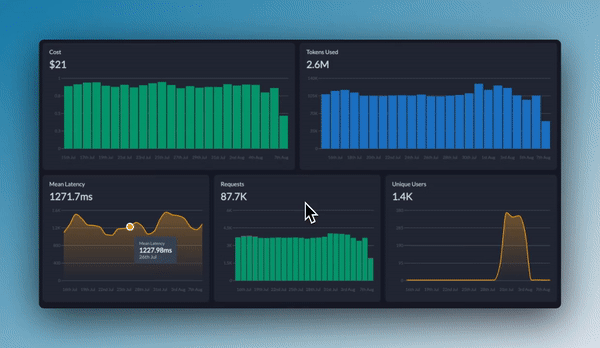

Portkey provides comprehensive observability for your PydanticAI agents, helping you understand exactly what’s happening during each execution.- Traces

- Logs

- Metrics & Dashboards

- Metadata Filtering

2. Reliability - Keep Your Agents Running Smoothly

When running agents in production, things can go wrong - API rate limits, network issues, or provider outages. Portkey’s reliability features ensure your agents keep running smoothly even when problems occur. It’s simple to enable fallback in your PydanticAI agents by using a Portkey Config:Automatic Retries

Handles temporary failures automatically. If an LLM call fails, Portkey will retry the same request for the specified number of times - perfect for rate limits or network blips.

Request Timeouts

Prevent your agents from hanging. Set timeouts to ensure you get responses (or can fail gracefully) within your required timeframes.

Conditional Routing

Send different requests to different providers. Route complex reasoning to GPT-4, creative tasks to Claude, and quick responses to Gemini based on your needs.

Fallbacks

Keep running even if your primary provider fails. Automatically switch to backup providers to maintain availability.

Load Balancing

Spread requests across multiple API keys or providers. Great for high-volume agent operations and staying within rate limits.

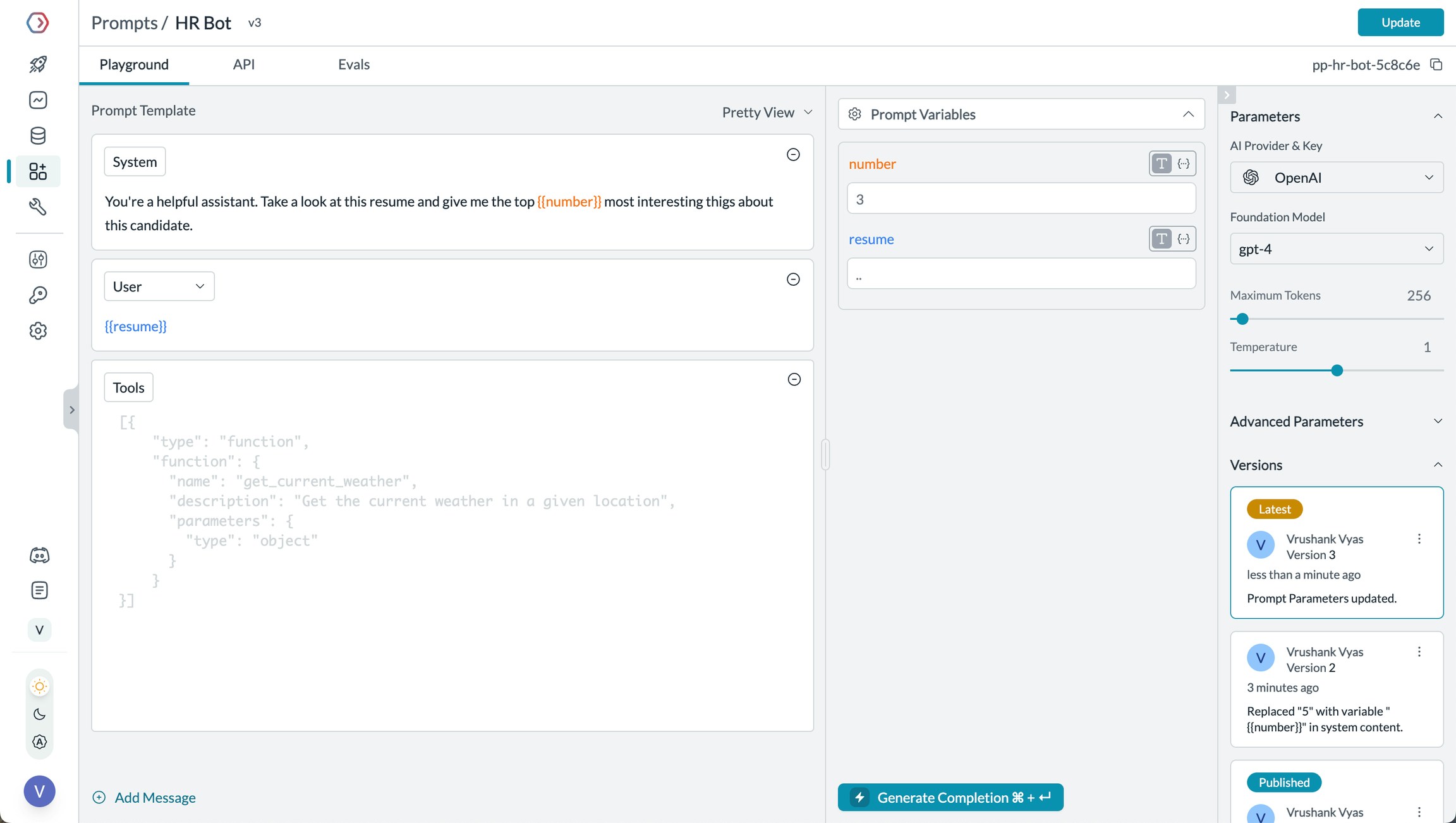

3. Prompting in PydanticAI

Portkey’s Prompt Engineering Studio helps you create, manage, and optimize the prompts used in your PydanticAI agents. Instead of hardcoding prompts or instructions, use Portkey’s prompt rendering API to dynamically fetch and apply your versioned prompts.

- Prompt Playground

- Using Prompt Templates

- Prompt Versioning

- Mustache Templating for variables

Prompt Playground is a place to compare, test and deploy perfect prompts for your AI application. It’s where you experiment with different models, test variables, compare outputs, and refine your prompt engineering strategy before deploying to production. It allows you to:

- Iteratively develop prompts before using them in your agents

- Test prompts with different variables and models

- Compare outputs between different prompt versions

- Collaborate with team members on prompt development

Prompt Engineering Studio

Learn more about Portkey’s prompt management features

4. Guardrails for Safe Agents

Guardrails ensure your PydanticAI agents operate safely and respond appropriately in all situations. Why Use Guardrails? PydanticAI agents can experience various failure modes:- Generating harmful or inappropriate content

- Leaking sensitive information like PII

- Hallucinating incorrect information

- Generating outputs in incorrect formats

- Detect and redact PII in both inputs and outputs

- Filter harmful or inappropriate content

- Validate response formats against schemas

- Check for hallucinations against ground truth

- Apply custom business logic and rules

Learn More About Guardrails

Explore Portkey’s guardrail features to enhance agent safety

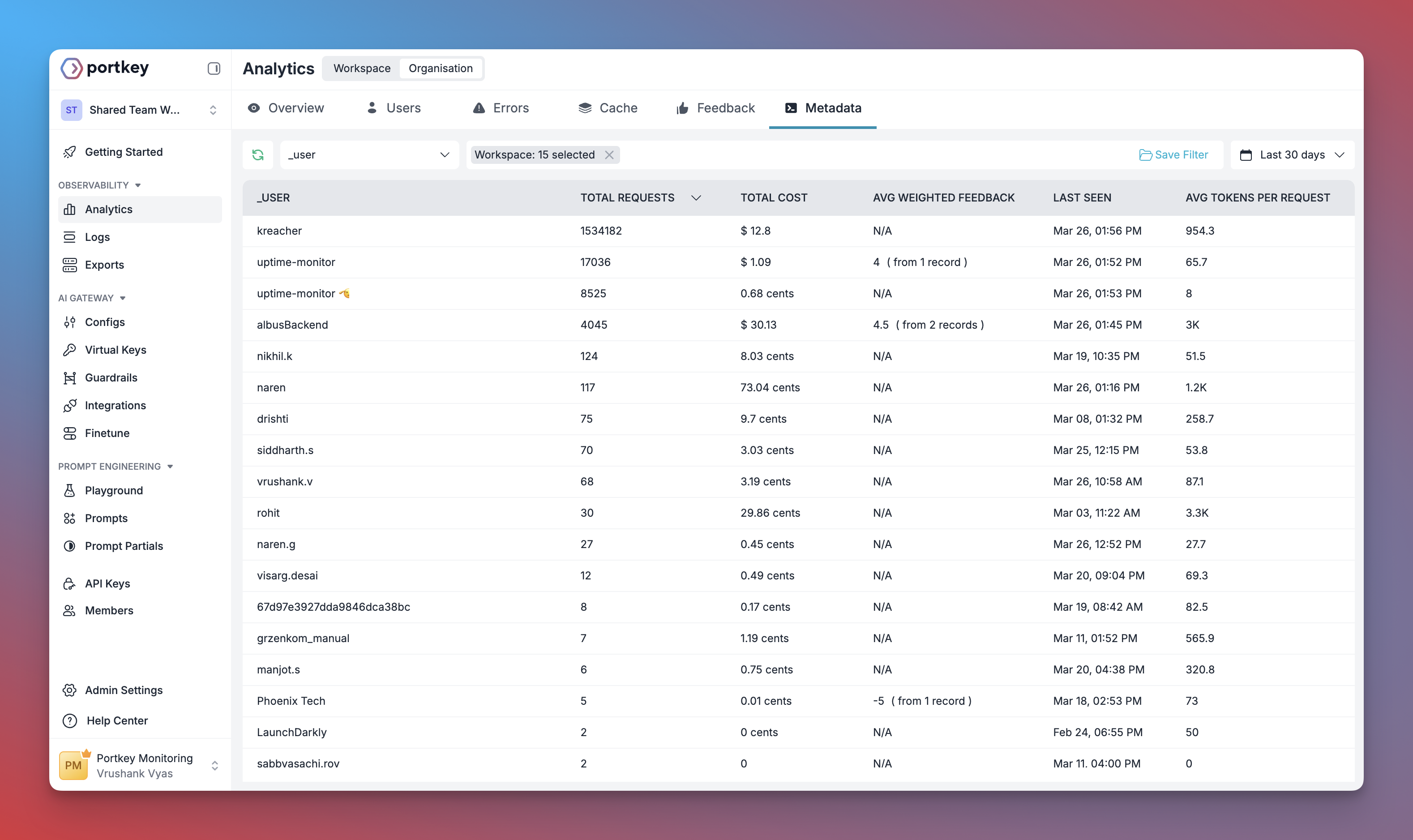

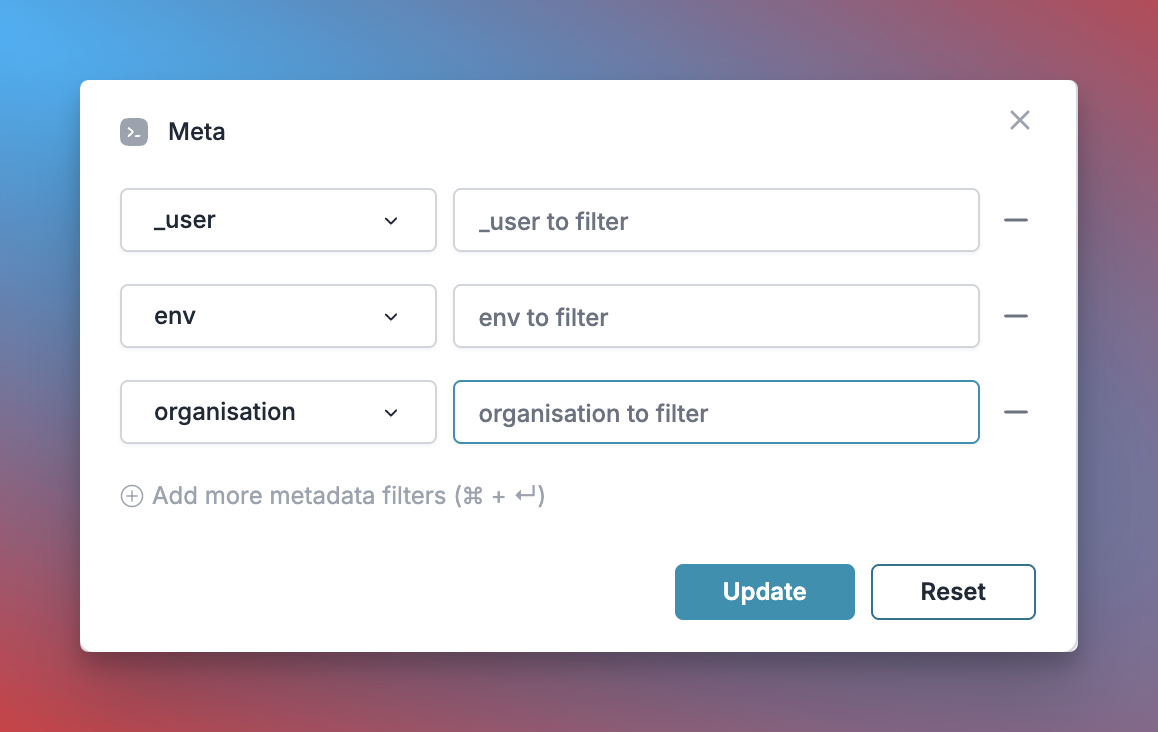

5. User Tracking with Metadata

Track individual users through your PydanticAI agents using Portkey’s metadata system. What is Metadata in Portkey? Metadata allows you to associate custom data with each request, enabling filtering, segmentation, and analytics. The special_user field is specifically designed for user tracking.

- Per-user cost tracking and budgeting

- Personalized user analytics

- Team or organization-level metrics

- Environment-specific monitoring (staging vs. production)

Learn More About Metadata

Explore how to use custom metadata to enhance your analytics

6. Caching for Efficient Agents

Implement caching to make your PydanticAI agents more efficient and cost-effective:- Simple Caching

- Semantic Caching

7. Model Interoperability

PydanticAI supports multiple LLM providers, and Portkey extends this capability by providing access to over 200 LLMs through a unified interface. You can easily switch between different models without changing your core agent logic:- OpenAI (GPT-4o, GPT-4 Turbo, etc.)

- Anthropic (Claude 3.5 Sonnet, Claude 3 Opus, etc.)

- Mistral AI (Mistral Large, Mistral Medium, etc.)

- Google Vertex AI (Gemini 1.5 Pro, etc.)

- Cohere (Command, Command-R, etc.)

- AWS Bedrock (Claude, Titan, etc.)

- Local/Private Models

Supported Providers

See the full list of LLM providers supported by Portkey

Set Up Enterprise Governance for PydanticAI

Why Enterprise Governance? If you are using PydanticAI inside your organization, you need to consider several governance aspects:- Cost Management: Controlling and tracking AI spending across teams

- Access Control: Managing which teams can use specific models

- Usage Analytics: Understanding how AI is being used across the organization

- Security & Compliance: Maintaining enterprise security standards

- Reliability: Ensuring consistent service across all users

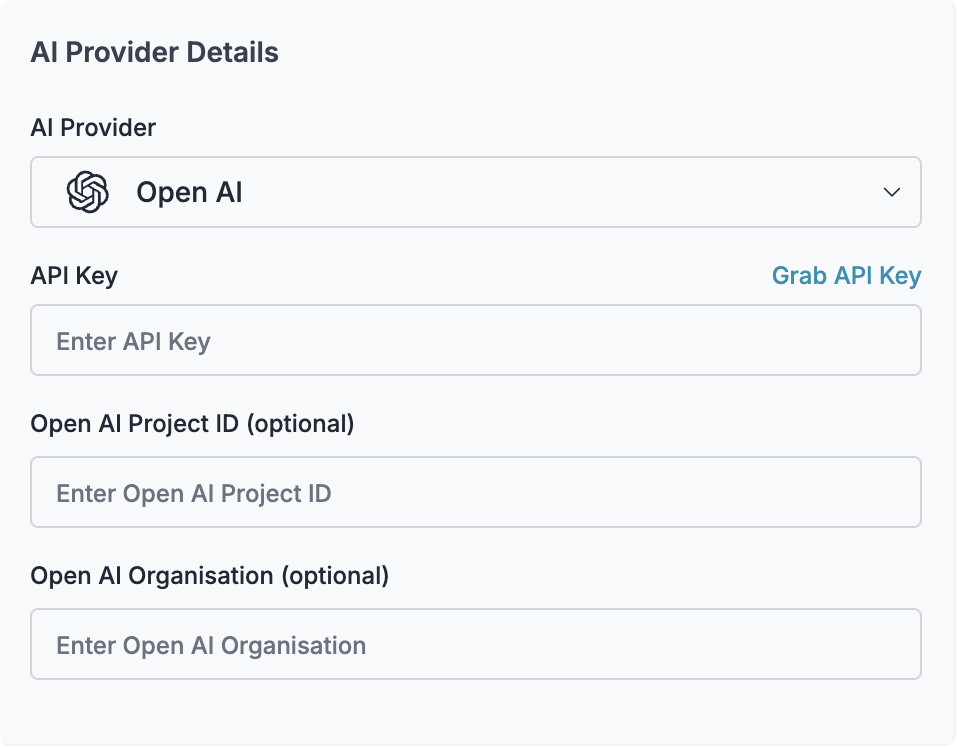

Create Virtual Key

Virtual Keys are Portkey’s secure way to manage your LLM provider API keys. They provide essential controls like:

- Budget limits for API usage

- Rate limiting capabilities

- Secure API key storage

Save your virtual key ID - you’ll need it for the next step.

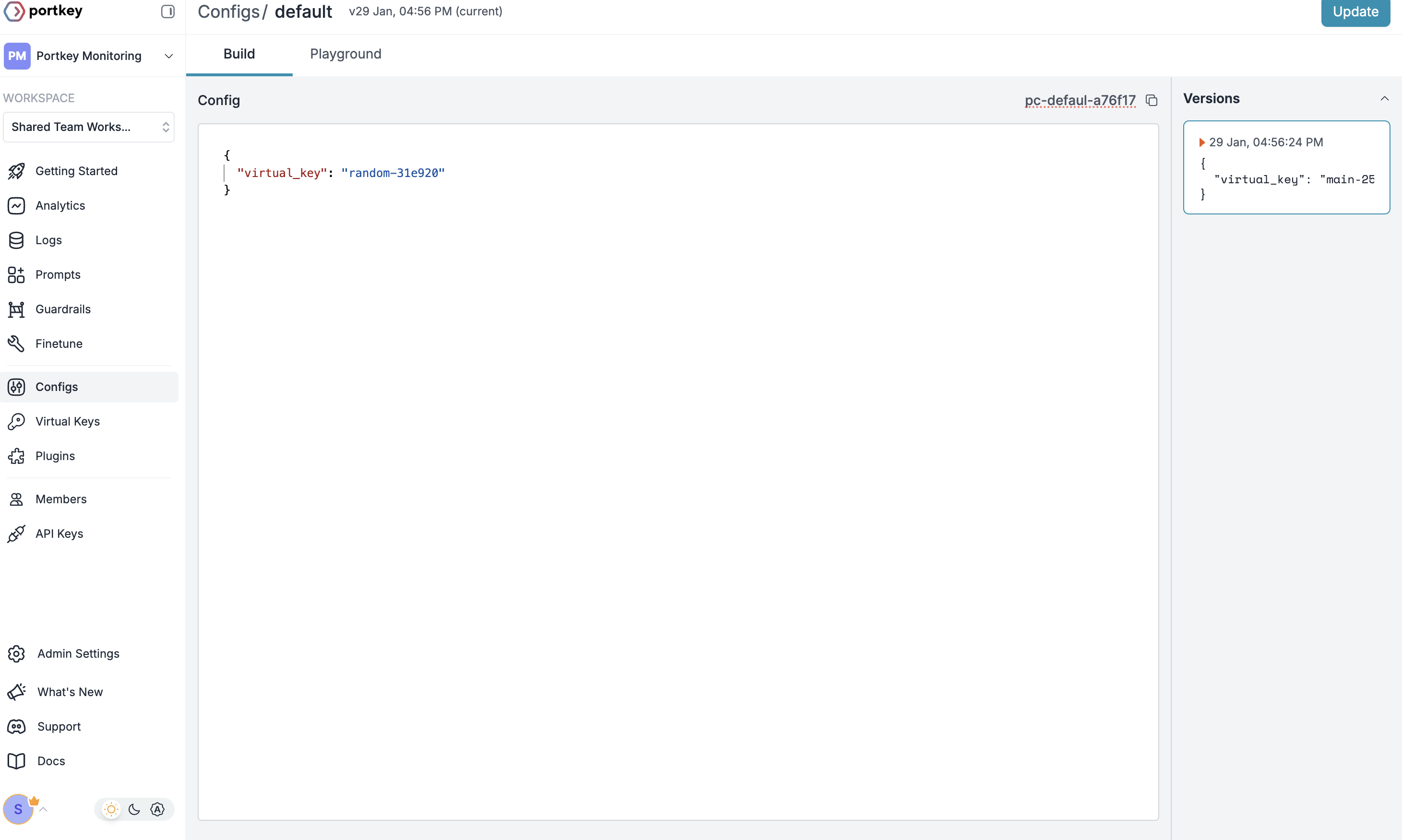

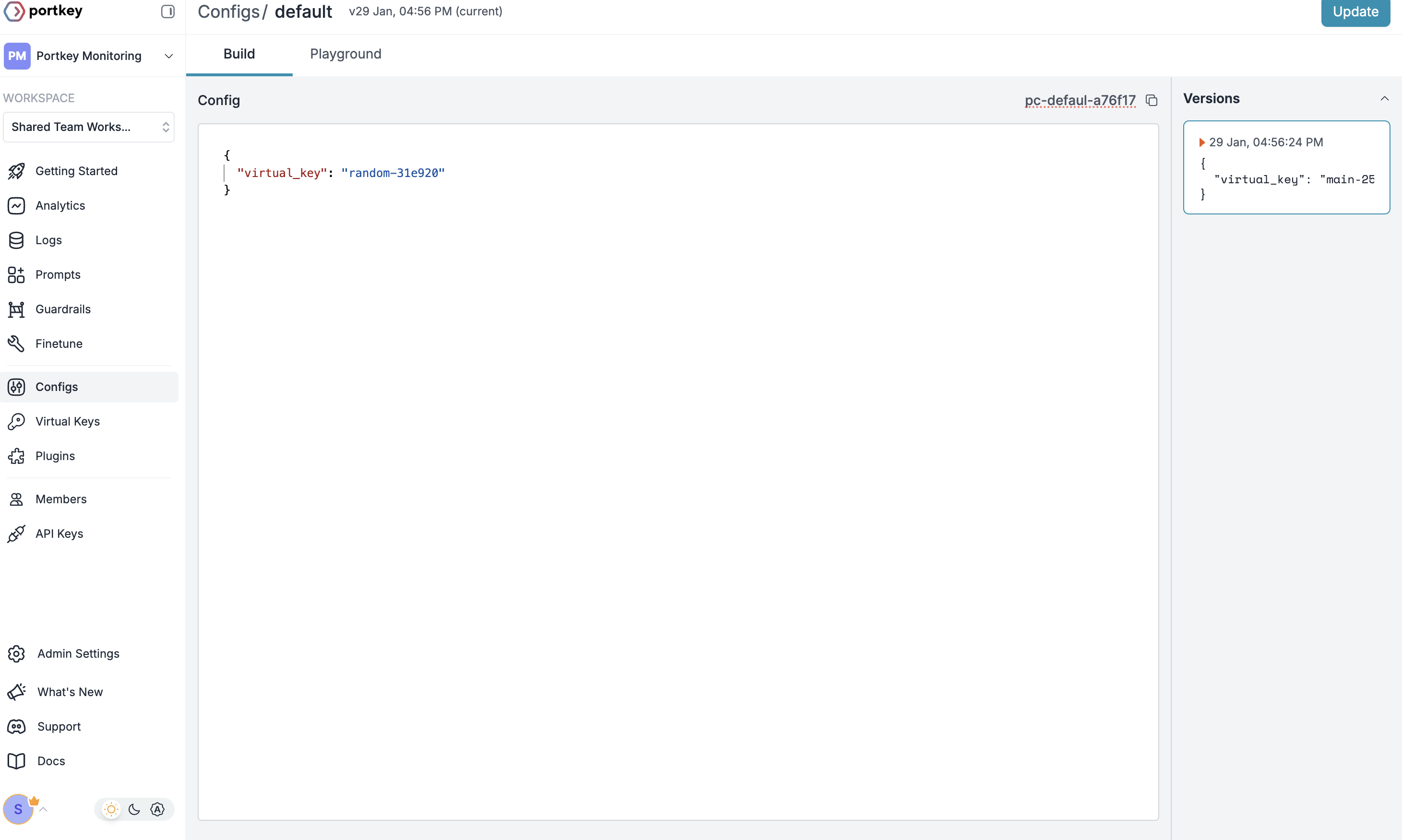

Create Default Config

Configs in Portkey define how your requests are routed, with features like advanced routing, fallbacks, and retries.To create your config:

- Go to Configs in Portkey dashboard

- Create new config with:

- Save and note the Config name for the next step

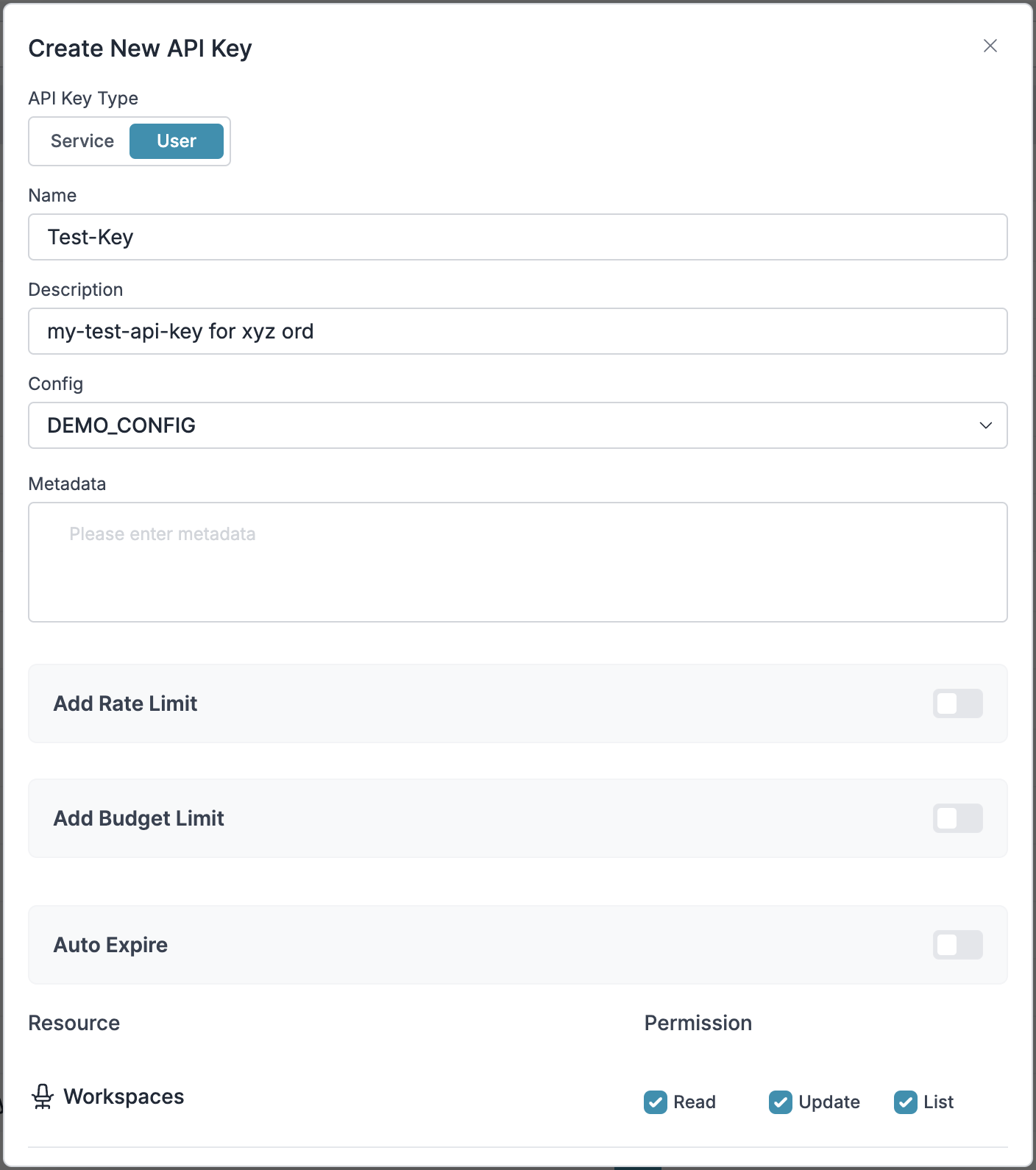

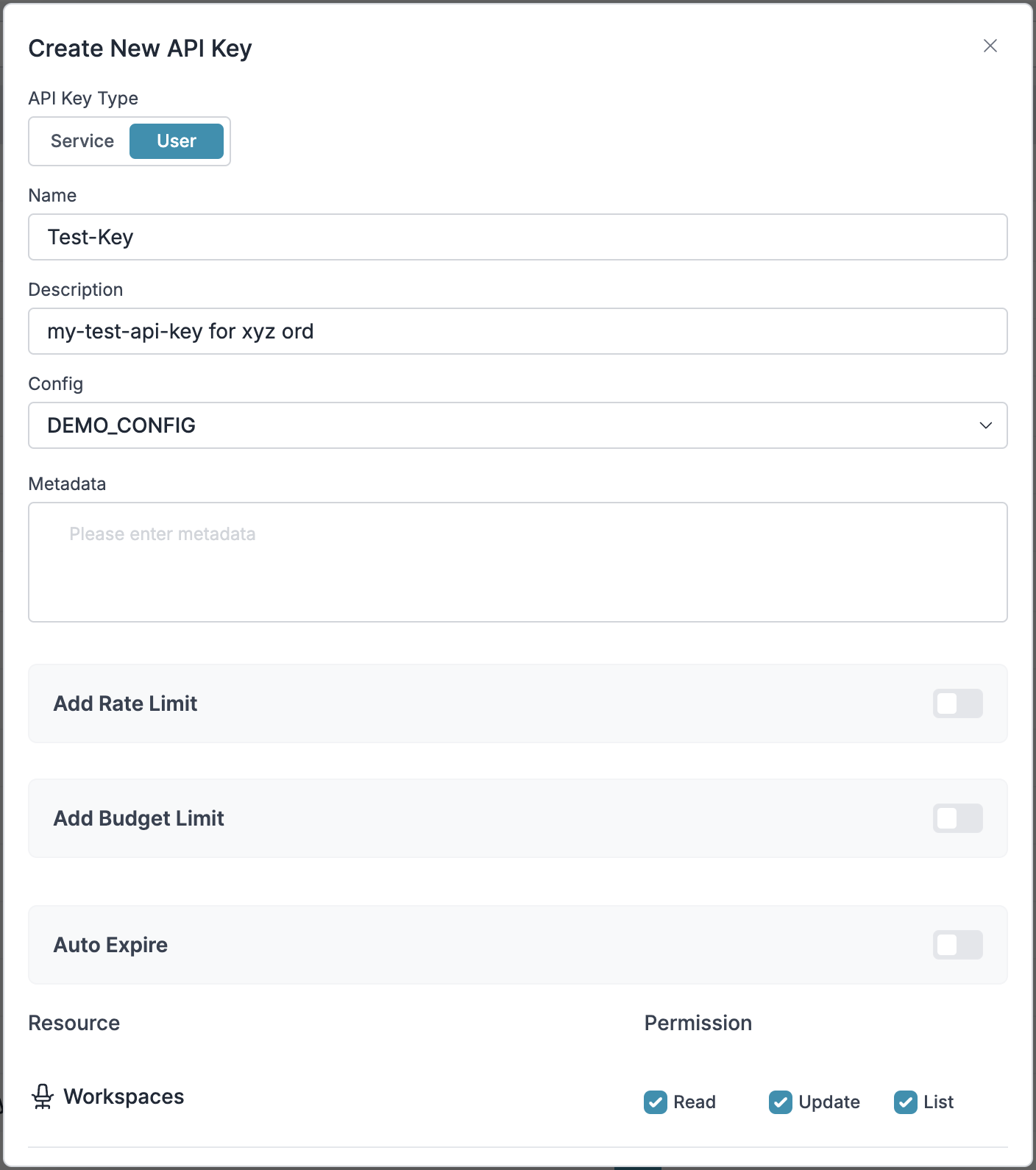

Configure Portkey API Key

Now create a Portkey API key and attach the config you created in Step 2:

- Go to API Keys in Portkey and Create new API key

- Select your config from

Step 2 - Generate and save your API key

Step 1: Implement Budget Controls & Rate Limits

Step 1: Implement Budget Controls & Rate Limits

Step 1: Implement Budget Controls & Rate Limits

Virtual Keys enable granular control over LLM access at the team/department level. This helps you:- Set up budget limits

- Prevent unexpected usage spikes using Rate limits

- Track departmental spending

Setting Up Department-Specific Controls:

- Navigate to Virtual Keys in Portkey dashboard

- Create new Virtual Key for each department with budget limits and rate limits

- Configure department-specific limits

Step 2: Define Model Access Rules

Step 2: Define Model Access Rules

Step 2: Define Model Access Rules

As your AI usage scales, controlling which teams can access specific models becomes crucial. Portkey Configs provide this control layer with features like:Access Control Features:

- Model Restrictions: Limit access to specific models

- Data Protection: Implement guardrails for sensitive data

- Reliability Controls: Add fallbacks and retry logic

Example Configuration:

Here’s a basic configuration to route requests to OpenAI, specifically using GPT-4o:Configs can be updated anytime to adjust controls without affecting running applications.

Step 3: Implement Access Controls

Step 3: Implement Access Controls

Step 3: Implement Access Controls

Create User-specific API keys that automatically:- Track usage per user/team with the help of virtual keys

- Apply appropriate configs to route requests

- Collect relevant metadata to filter logs

- Enforce access permissions

Step 4: Deploy & Monitor

Step 4: Deploy & Monitor

Step 4: Deploy & Monitor

After distributing API keys to your team members, your enterprise-ready PydanticAI setup is ready to go. Each team member can now use their designated API keys with appropriate access levels and budget controls.Monitor usage in Portkey dashboard:- Cost tracking by department

- Model usage patterns

- Request volumes

- Error rates

Enterprise Features Now Available

Your PydanticAI integration now has:- Departmental budget controls

- Model access governance

- Usage tracking & attribution

- Security guardrails

- Reliability features

Frequently Asked Questions

How does Portkey enhance PydanticAI?

How does Portkey enhance PydanticAI?

Portkey adds production-readiness to PydanticAI through comprehensive observability (traces, logs, metrics), reliability features (fallbacks, retries, caching), and access to 1600+ LLMs through a unified interface. This makes it easier to debug, optimize, and scale your agent applications, all while preserving PydanticAI’s strong type safety.

Can I use Portkey with existing PydanticAI applications?

Can I use Portkey with existing PydanticAI applications?

Yes! Portkey integrates seamlessly with existing PydanticAI applications. You just need to replace your client initialization code with the Portkey-enabled version. The rest of your agent code remains unchanged and continues to benefit from PydanticAI’s strong typing.

Does Portkey work with all PydanticAI features?

Does Portkey work with all PydanticAI features?

Portkey supports all PydanticAI features, including structured outputs, tool use, multi-agent systems, and more. It adds observability and reliability without limiting any of the framework’s functionality.

Can I track usage across multiple agents in a workflow?

Can I track usage across multiple agents in a workflow?

Yes, Portkey allows you to use a consistent

trace_id across multiple agents and requests to track the entire workflow. This is especially useful for multi-agent systems where you want to understand the full execution path.How do I filter logs and traces for specific agent runs?

How do I filter logs and traces for specific agent runs?

Portkey allows you to add custom metadata to your agent runs, which you can then use for filtering. Add fields like

agent_name, agent_type, or session_id to easily find and analyze specific agent executions.Can I use my own API keys with Portkey?

Can I use my own API keys with Portkey?

Yes! Portkey uses your own API keys for the various LLM providers. It securely stores them as virtual keys, allowing you to easily manage and rotate keys without changing your code.