Realtime API support is coming soon! Join our Discord community to be the first to know when LiveKit’s realtime model integration with Portkey is available.

- Unified AI Gateway - Single interface for 250+ LLMs with API key management

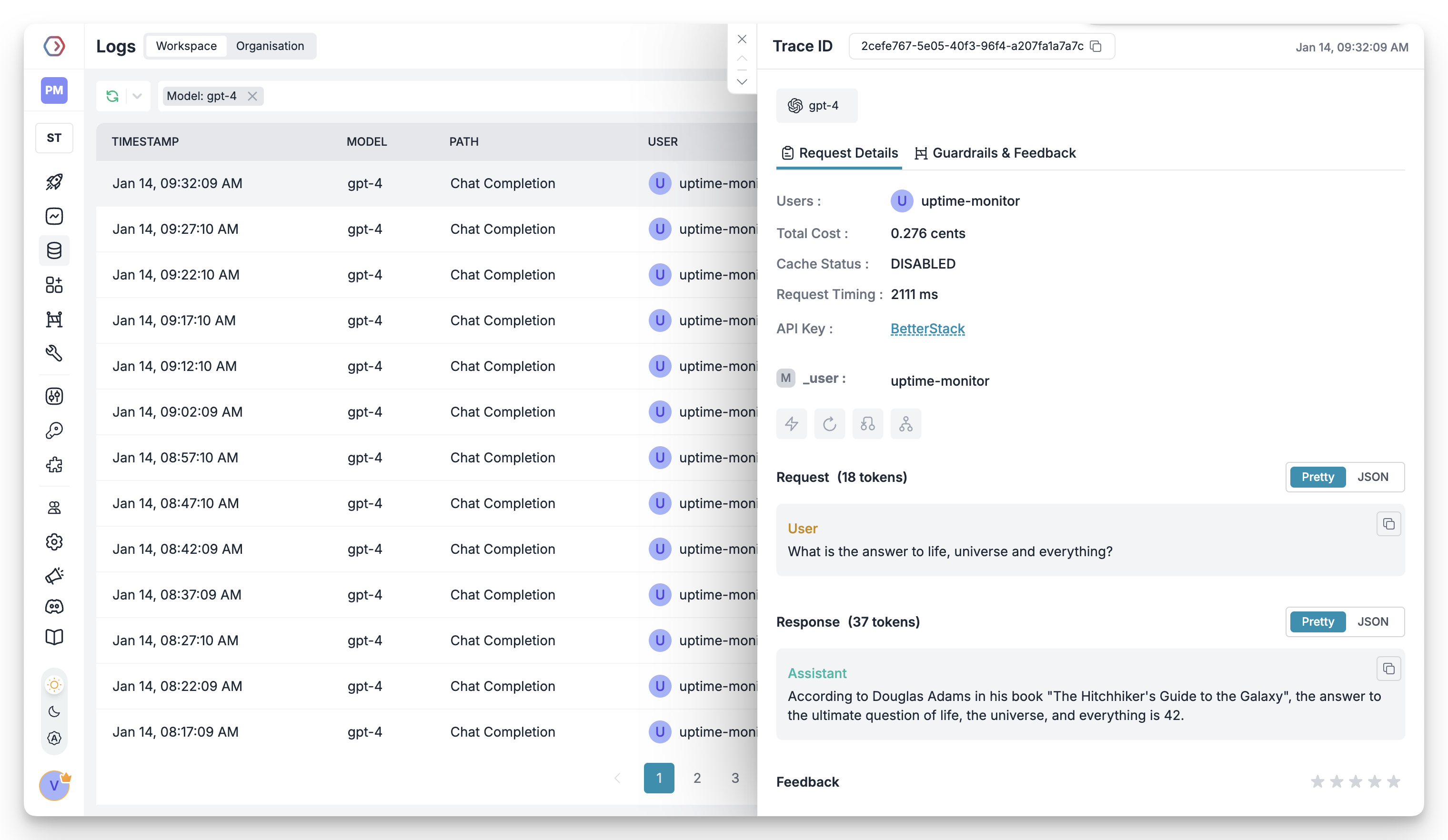

- Centralized AI observability: Real-time usage tracking for 40+ key metrics and logs for every request

- Governance - Real-time spend tracking, set budget limits and RBAC in your LiveKit agents

- Security Guardrails - PII detection, content filtering, and compliance controls

If you are an enterprise looking to deploy LiveKit agents in production, check out this section.

1. Setting up Portkey

Portkey allows you to use 250+ LLMs with your LiveKit agents, with minimal configuration required. Let’s set up the core components in Portkey that you’ll need for integration.

Create Virtual Key

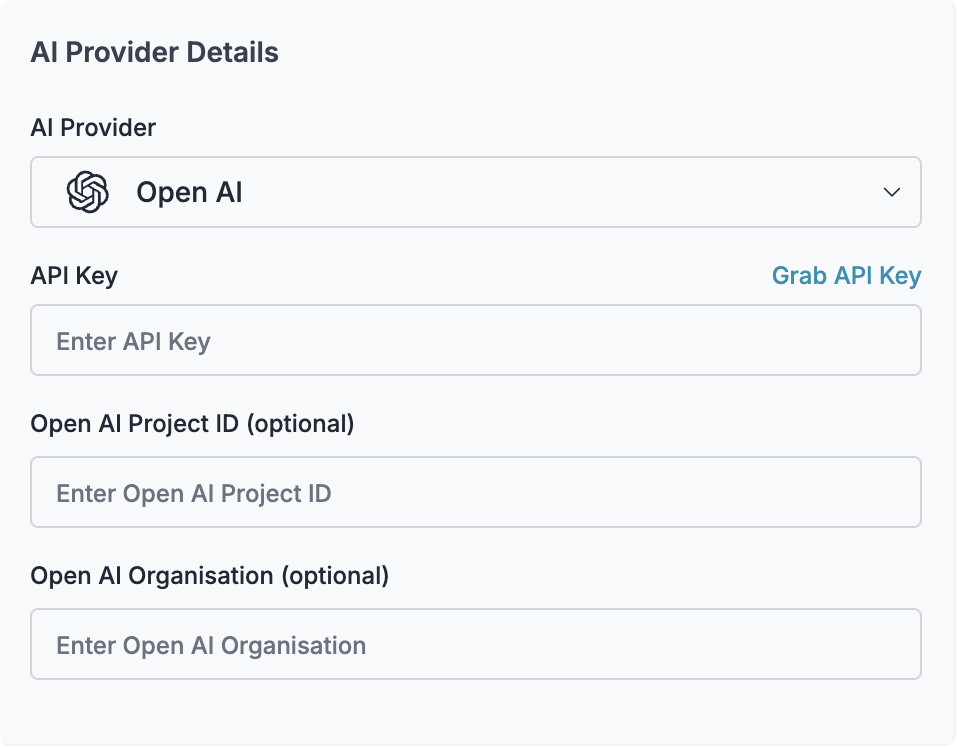

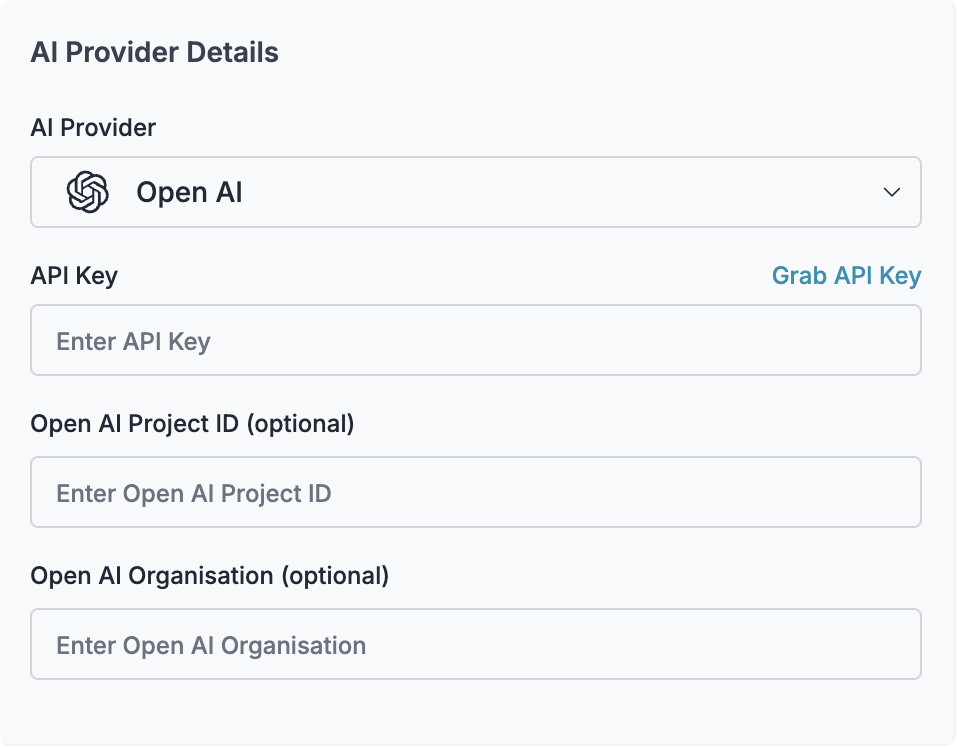

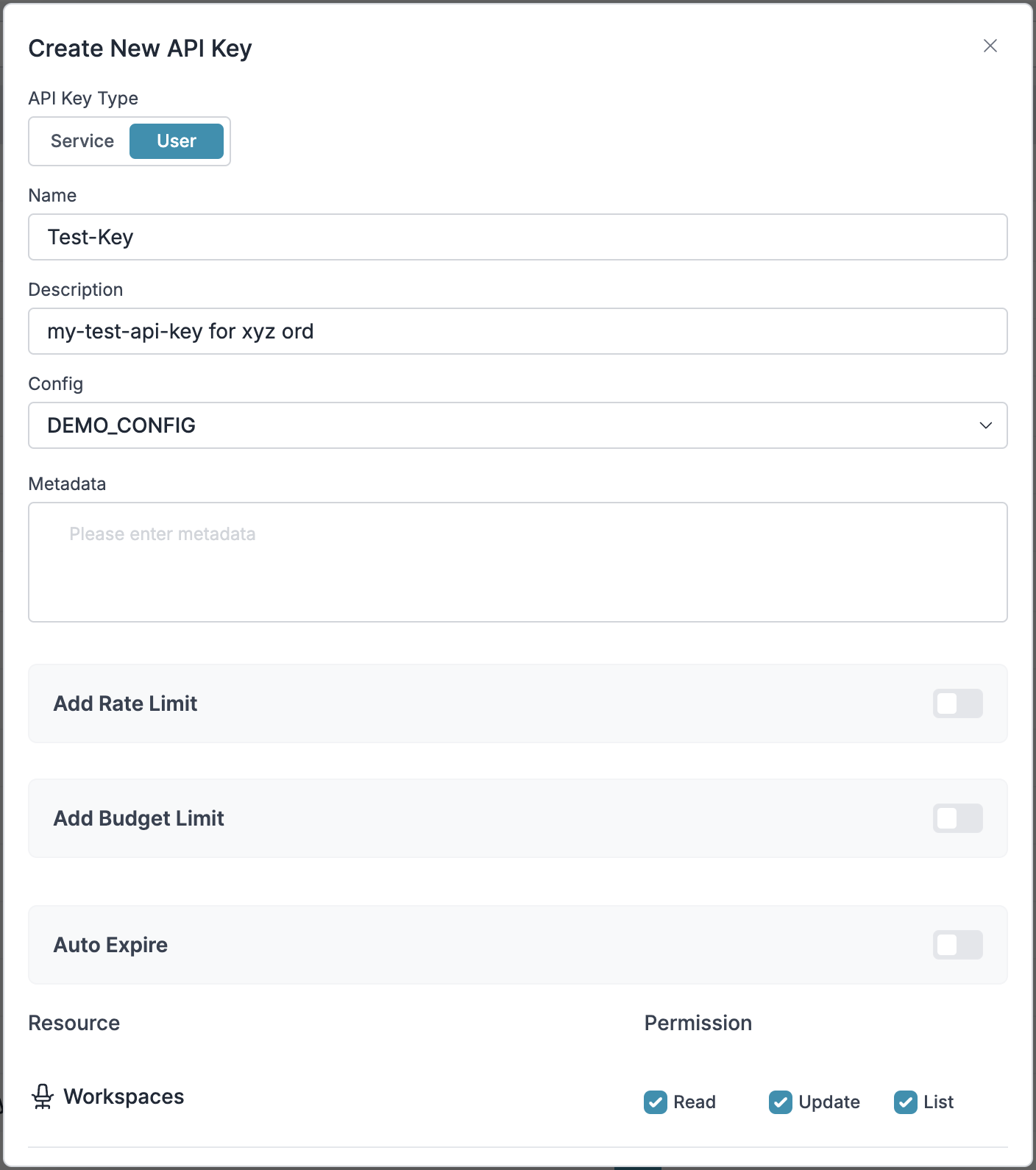

Virtual Keys are Portkey’s secure way to manage your LLM provider API keys. For LiveKit integration, you’ll need to create a virtual key for OpenAI (or any other LLM provider you prefer).

To create a virtual key:

- Go to Virtual Keys in the Portkey App

- Click “Add Virtual Key” and select OpenAI as the provider

- Add your OpenAI API key

- Save and copy the virtual key ID

Save your virtual key ID - you’ll need it for the next step.

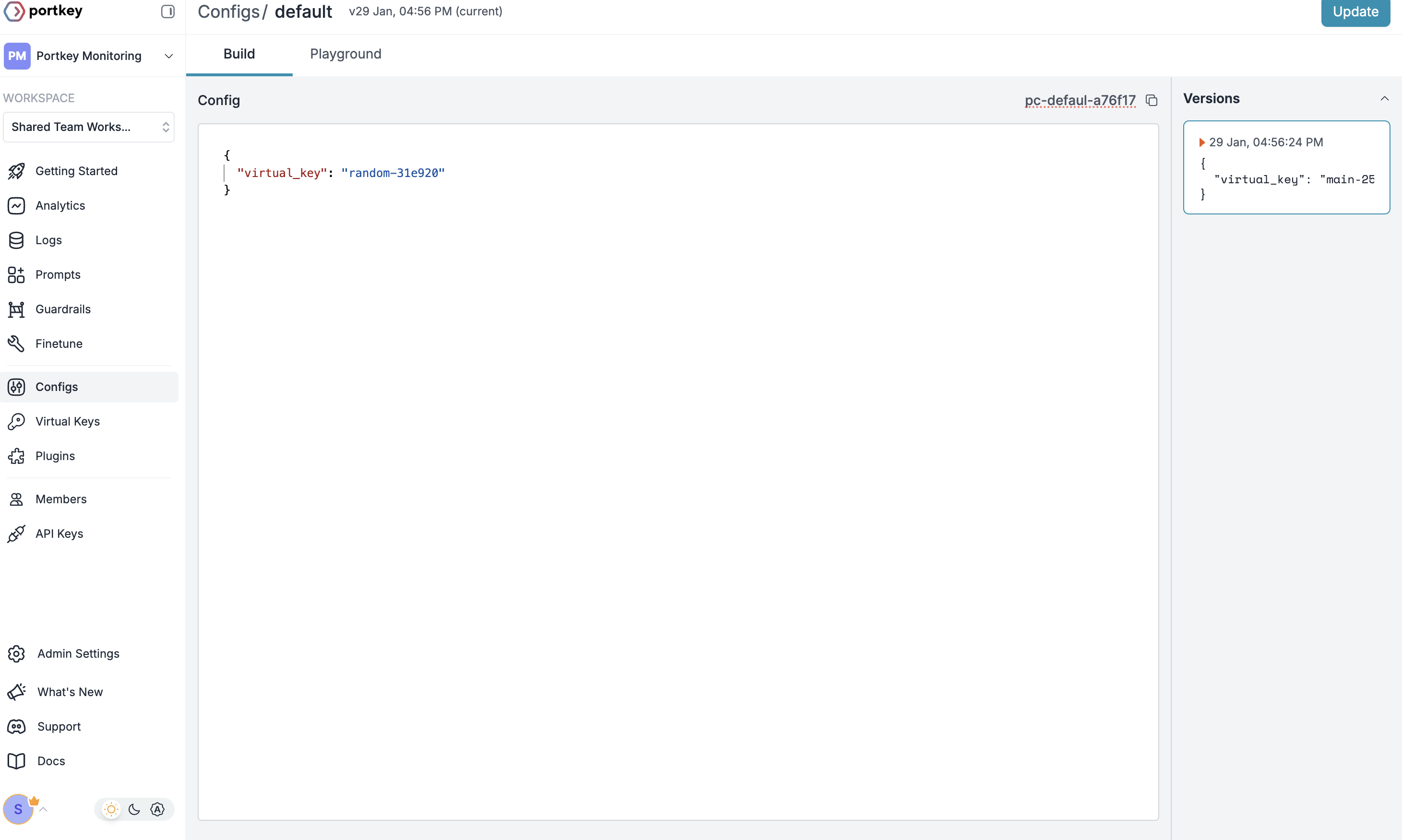

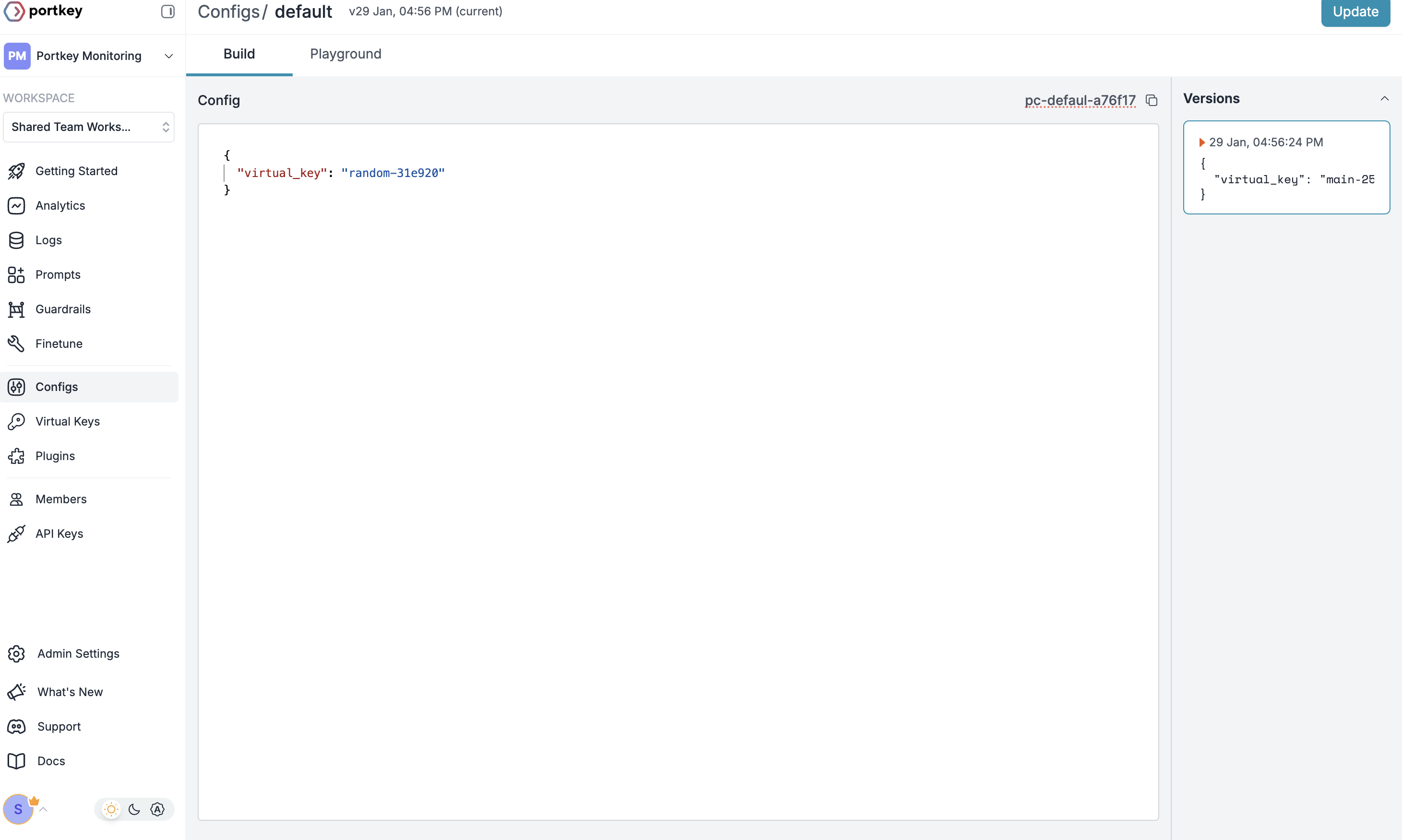

Create Default Config

Configs in Portkey define how your requests are routed and can enable features like fallbacks, caching, and more.

To create your config:

- Go to Configs in the Portkey dashboard

- Create a new config that references the virtual key ID from the previous step

- Save and note the Config ID for the next step

This basic config connects to your virtual key. You can add advanced features like caching, fallbacks, and guardrails later.
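As a rough sketch of the basic config described above, the snippet below builds the JSON you could paste into the Portkey config editor. It assumes the config needs only a `virtual_key` field; the key value is a placeholder, and `build_basic_config` is a hypothetical helper used for illustration, not part of any SDK.

```python
import json

# Placeholder: replace with the virtual key ID you copied from the Portkey app.
VIRTUAL_KEY_ID = "YOUR_OPENAI_VIRTUAL_KEY"


def build_basic_config(virtual_key: str) -> dict:
    """Return a minimal config that routes requests through one virtual key."""
    if not virtual_key:
        raise ValueError("A virtual key ID is required")
    return {"virtual_key": virtual_key}


basic_config = build_basic_config(VIRTUAL_KEY_ID)
config_json = json.dumps(basic_config, indent=2)
print(config_json)
```

The printed JSON is what goes into the config body; the Config ID that Portkey assigns after saving is what you attach to your API key in the next step.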

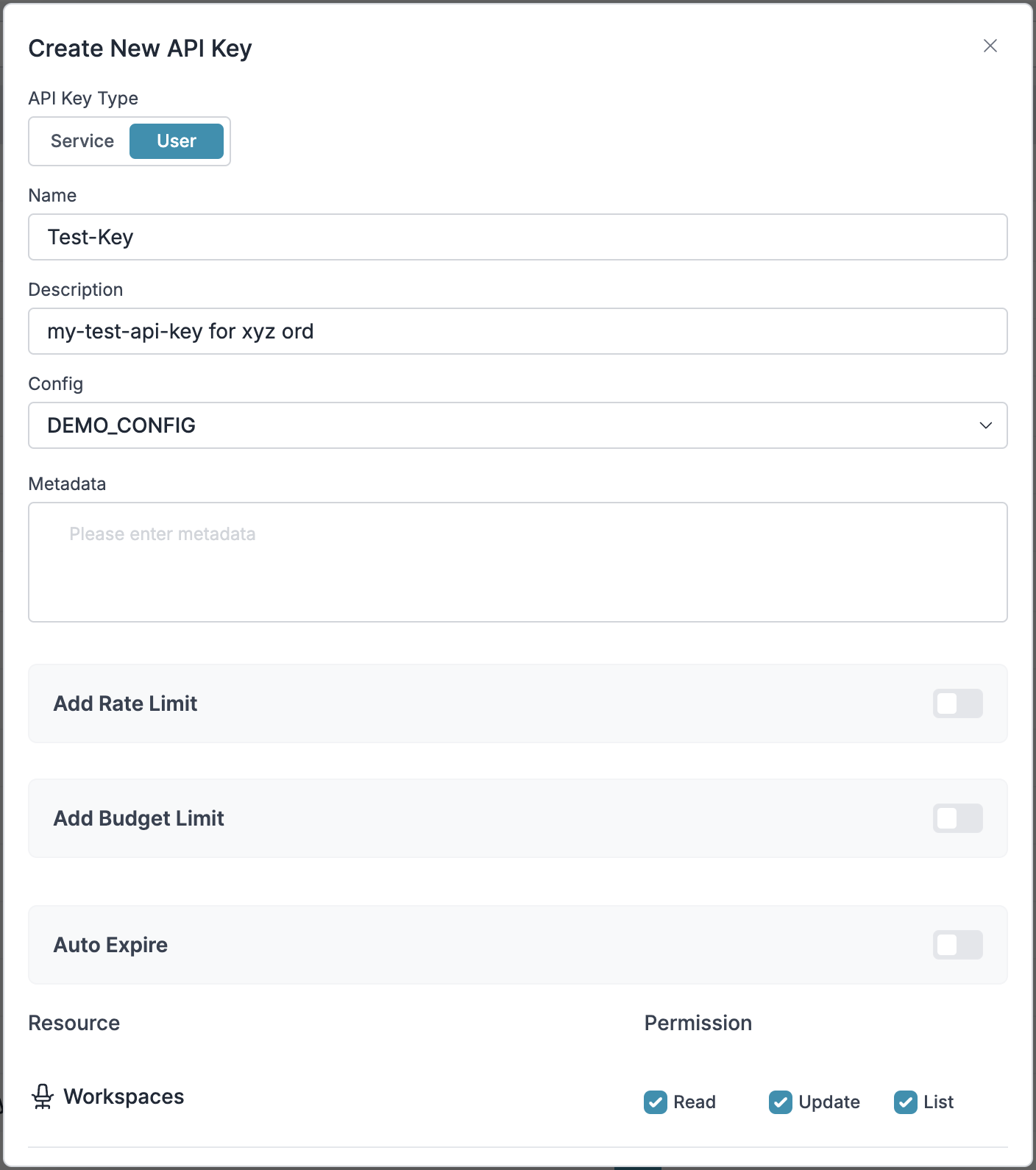

Configure Portkey API Key

Now create a Portkey API key and attach the config you created:

- Go to API Keys in Portkey

- Create new API key

- Select your config from Step 2

- Generate and save your API key

Save your API key securely - you’ll need it for LiveKit integration.

2. Integrate Portkey with LiveKit

Now that you have your Portkey components set up, let’s integrate them with LiveKit agents.

Installation

Install the required packages:

Configuration
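The configuration step comes down to pointing an OpenAI-compatible client at Portkey's gateway and sending your Portkey API key with each request. The sketch below only prepares those settings with the standard library. `build_portkey_headers` and `client_settings` are hypothetical names, and how the values are passed to the LiveKit OpenAI plugin depends on the plugin version you have installed, so treat that part as an assumption to check against its documentation.

```python
import os
from typing import Dict, Optional

# Portkey's hosted gateway endpoint for OpenAI-compatible requests.
PORTKEY_GATEWAY_URL = "https://api.portkey.ai/v1"


def build_portkey_headers(api_key: str, config_id: Optional[str] = None) -> Dict[str, str]:
    """Build the Portkey request headers for an OpenAI-compatible client."""
    if not api_key:
        raise ValueError("A Portkey API key is required")
    headers = {"x-portkey-api-key": api_key}
    # The config header is optional when a default config is attached to the API key.
    if config_id:
        headers["x-portkey-config"] = config_id
    return headers


# Read the key from the environment; the fallback string is only a placeholder.
portkey_api_key = os.environ.get("PORTKEY_API_KEY", "YOUR_PORTKEY_API_KEY")

# Hypothetical settings bundle to hand to whichever OpenAI-compatible client you use.
client_settings = {
    "base_url": PORTKEY_GATEWAY_URL,
    "default_headers": build_portkey_headers(portkey_api_key),
}
print(client_settings["base_url"])
```

Keeping the key in an environment variable avoids committing it to source control.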

End-to-End Example using Portkey and LiveKit

Build a simple voice assistant with Python in less than 10 minutes.

3. Set Up Enterprise Governance for LiveKit

Why Enterprise Governance?

If you are using LiveKit inside your organization, you need to consider several governance aspects:

- Cost Management: Controlling and tracking AI spending across teams

- Access Control: Managing which teams can use specific models

- Usage Analytics: Understanding how AI is being used across the organization

- Security & Compliance: Maintaining enterprise security standards

- Reliability: Ensuring consistent service across all users

Step 1: Implement Budget Controls & Rate Limits

Virtual Keys enable granular control over LLM access at the team/department level. This helps you:

- Set up budget limits

- Prevent unexpected usage spikes using rate limits

- Track departmental spending

Setting Up Department-Specific Controls:

- Navigate to Virtual Keys in the Portkey dashboard

- Create a new virtual key for each department

- Configure department-specific budget limits and rate limits on each key

Step 2: Define Model Access Rules

As your AI usage scales, controlling which teams can access specific models becomes crucial. Portkey Configs provide this control layer.

Access Control Features:

- Model Restrictions: Limit access to specific models

- Data Protection: Implement guardrails for sensitive data

- Reliability Controls: Add fallbacks and retry logic

Example Configuration:

A basic configuration routes requests to OpenAI, specifically using GPT-4o. Configs can be updated anytime to adjust controls without affecting running applications.
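A minimal sketch of such a config, assuming Portkey's `strategy`/`targets` layout with an `override_params` entry to pin the model. The virtual key is a placeholder and `build_model_config` is a hypothetical helper used only to produce the JSON.

```python
import json


def build_model_config(virtual_key: str, model: str) -> dict:
    """Return a single-target config that pins every request to one model."""
    return {
        "strategy": {"mode": "single"},
        "targets": [
            {
                "virtual_key": virtual_key,
                # override_params replaces the model named in the incoming request.
                "override_params": {"model": model},
            }
        ],
    }


gpt4o_config = build_model_config("YOUR_OPENAI_VIRTUAL_KEY", "gpt-4o")
print(json.dumps(gpt4o_config, indent=2))
```

Because the model is set in the config rather than in agent code, a team's model can be changed from the dashboard without redeploying the agent.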

Step 3: Implement Access Controls

Create user-specific API keys that automatically:

- Track usage per user/team with the help of virtual keys

- Apply appropriate configs to route requests

- Collect relevant metadata to filter logs

- Enforce access permissions

Step 4: Deploy & Monitor

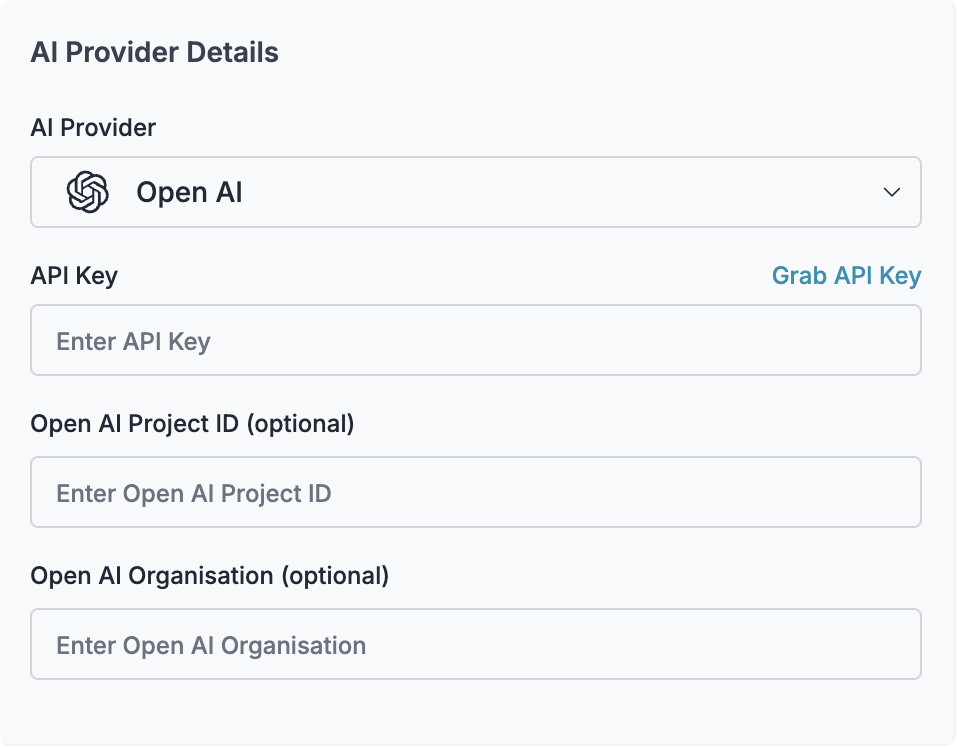

After distributing API keys to your team members, your enterprise-ready LiveKit setup is ready to go. Each team member can now use their designated API keys with appropriate access levels and budget controls. Apply your governance setup using the integration steps from earlier sections.

Monitor usage in the Portkey dashboard:

- Cost tracking by department

- Model usage patterns

- Request volumes

- Error rates

Enterprise Features Now Available

LiveKit now has:

- Departmental budget controls

- Model access governance

- Usage tracking & attribution

- Security guardrails

- Reliability features

Portkey Features

Now that you have an enterprise-grade LiveKit setup, let’s explore the features Portkey provides to ensure secure, efficient, and cost-effective AI operations.

1. Comprehensive Metrics

Using Portkey you can track 40+ key metrics including cost, token usage, response time, and performance across all your LLM providers in real time. You can also filter these metrics based on custom metadata that you can set in your configs. Learn more about metadata here.

2. Advanced Logs

Portkey’s logging dashboard provides detailed logs for every request made to your LLMs. These logs include:

- Complete request and response tracking

- Metadata tags for filtering

- Cost attribution and much more…

3. Unified Access to 1600+ LLMs

You can easily switch between 1600+ LLMs. Call models from providers such as Anthropic, Gemini, Mistral, Azure OpenAI, Google Vertex AI, AWS Bedrock, and many more by simply changing the virtual key in your default config object.

4. Advanced Metadata Tracking

Using Portkey, you can add custom metadata to your LLM requests for detailed tracking and analytics. Use metadata tags to filter logs, track usage, and attribute costs across departments and teams.

Custom Metadata

5. Enterprise Access Management

Budget Controls

Set and manage spending limits across teams and departments. Control costs with granular budget limits and usage tracking.

Single Sign-On (SSO)

Enterprise-grade SSO integration with support for SAML 2.0, Okta, Azure AD, and custom providers for secure authentication.

Organization Management

Hierarchical organization structure with workspaces, teams, and role-based access control for enterprise-scale deployments.

Access Rules & Audit Logs

Comprehensive access control rules and detailed audit logging for security compliance and usage tracking.

6. Reliability Features

Fallbacks

Automatically switch to backup targets if the primary target fails.
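A fallback can be sketched as a config whose targets are tried in order. This assumes Portkey's `fallback` strategy mode; the virtual key names are placeholders and `build_fallback_config` is a hypothetical helper.

```python
from typing import List


def build_fallback_config(virtual_keys: List[str]) -> dict:
    """Return a config that tries each virtual key in order until one succeeds."""
    if len(virtual_keys) < 2:
        raise ValueError("A fallback needs a primary and at least one backup target")
    return {
        "strategy": {"mode": "fallback"},
        # Order matters: the first target is the primary, the rest are backups.
        "targets": [{"virtual_key": key} for key in virtual_keys],
    }


fallback_config = build_fallback_config(
    ["YOUR_OPENAI_VIRTUAL_KEY", "YOUR_ANTHROPIC_VIRTUAL_KEY"]
)
print(fallback_config)
```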

Conditional Routing

Route requests to different targets based on specified conditions.

Load Balancing

Distribute requests across multiple targets based on defined weights.
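For illustration, a weighted split might be written as follows. The sketch assumes Portkey's `loadbalance` strategy mode with a `weight` on each target; the key names and the 70/30 split are made-up values, and `build_loadbalance_config` is a hypothetical helper.

```python
from typing import Dict


def build_loadbalance_config(weights: Dict[str, float]) -> dict:
    """Return a config that spreads requests across targets by relative weight."""
    if not weights:
        raise ValueError("At least one target is required")
    if any(weight <= 0 for weight in weights.values()):
        raise ValueError("Weights must be positive")
    return {
        "strategy": {"mode": "loadbalance"},
        "targets": [
            {"virtual_key": key, "weight": weight} for key, weight in weights.items()
        ],
    }


loadbalance_config = build_loadbalance_config(
    {"YOUR_OPENAI_VIRTUAL_KEY": 0.7, "YOUR_AZURE_VIRTUAL_KEY": 0.3}
)
print(loadbalance_config)
```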

Caching

Enable caching of responses to improve performance and reduce costs.
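Caching is likewise switched on in the config. The sketch below assumes a `cache` block with a `mode` of `simple` or `semantic` and a `max_age` in seconds; the 60-second value is arbitrary and `build_cache_config` is a hypothetical helper.

```python
def build_cache_config(mode: str = "simple", max_age_seconds: int = 60) -> dict:
    """Return a config fragment that enables response caching."""
    if mode not in ("simple", "semantic"):
        raise ValueError("mode must be 'simple' or 'semantic'")
    if max_age_seconds <= 0:
        raise ValueError("max_age_seconds must be positive")
    return {"cache": {"mode": mode, "max_age": max_age_seconds}}


cache_config = build_cache_config()
print(cache_config)
```

For voice agents, a short cache lifetime is usually a reasonable starting point, since conversational prompts rarely repeat exactly.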

Smart Retries

Automatic retry handling with exponential backoff for failed requests.
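A retry policy can be sketched as a small config fragment. This assumes a `retry` block with `attempts` and `on_status_codes` fields. The upper bound of 5 attempts checked here and the listed status codes are assumptions for illustration, and `build_retry_config` is a hypothetical helper.

```python
from typing import List, Optional


def build_retry_config(attempts: int = 3, status_codes: Optional[List[int]] = None) -> dict:
    """Return a config fragment that retries failed requests."""
    # The 1..5 range is an assumed limit; confirm it against the Portkey docs.
    if not 1 <= attempts <= 5:
        raise ValueError("attempts must be between 1 and 5")
    if status_codes is None:
        # Rate limiting and transient server errors are common retry candidates.
        status_codes = [429, 500, 502, 503, 504]
    return {"retry": {"attempts": attempts, "on_status_codes": status_codes}}


retry_config = build_retry_config()
print(retry_config)
```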

Budget Limits

Set and manage budget limits across teams and departments. Control costs with granular budget limits and usage tracking.

7. Advanced Guardrails

Protect your project’s data and enhance reliability with real-time checks on LLM inputs and outputs. Leverage guardrails to:

- Prevent sensitive data leaks

- Enforce compliance with organizational policies

- PII detection and masking

- Content filtering

- Custom security rules

- Data compliance checks

Guardrails

Implement real-time protection for your LLM interactions with automatic detection and filtering of sensitive content, PII, and custom security rules. Enable comprehensive data protection while maintaining compliance with organizational policies.

FAQs

Can I use models other than OpenAI with LiveKit through Portkey?

Yes! Portkey supports 250+ LLMs. Simply create a virtual key for your preferred provider (Anthropic, Google, Cohere, etc.) and update your config accordingly. The LiveKit OpenAI client will work seamlessly with any provider through Portkey.

How do I track costs for different voice agents?

Use metadata tags when creating Portkey headers to segment costs:

- Add agent_type, department, or customer_id tags

- View costs filtered by these tags in the Portkey dashboard

- Set up separate virtual keys with budget limits for each use case
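The tagging described in the list above can be sketched as a request header. This assumes metadata is sent as a JSON string in the `x-portkey-metadata` header; the tag values are made-up examples and `build_metadata_header` is a hypothetical helper.

```python
import json
from typing import Dict


def build_metadata_header(**tags: str) -> Dict[str, str]:
    """Serialize cost-attribution tags into a Portkey metadata header."""
    for name, value in tags.items():
        if not isinstance(value, str):
            raise TypeError(f"Metadata value for {name!r} must be a string")
    return {"x-portkey-metadata": json.dumps(tags)}


metadata_header = build_metadata_header(
    agent_type="support_voice_agent",
    department="customer_success",
    customer_id="cust_123",
)
print(metadata_header)
```

Merge this header into the other Portkey headers sent by the agent, so that each request appears in the dashboard under the same tags.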

What happens if my primary LLM provider goes down?

Configure fallbacks in your Portkey config to automatically switch to backup providers. Your LiveKit agents will continue working without any code changes or downtime.

Can I implement custom business logic for my agents?

Yes! Use Portkey’s hooks and guardrails to:

- Filter sensitive information

- Add custom headers or modify requests

- Implement business-specific validation

- Route requests based on custom logic

How do I migrate existing LiveKit agents to use Portkey?

Migration is simple:

- Create virtual keys and configs in Portkey

- Update the OpenAI client initialization to use Portkey’s base URL

- Add Portkey headers with your API key and config

- No other code changes needed!

Next Steps

Ready to build production voice AI?

- Join our Discord for support and updates

- Explore more integrations

- Read about advanced configs

- Learn about guardrails

For enterprise support and custom features for your LiveKit deployment, contact our enterprise team.